From Lab to Algorithm: How AI is Revolutionizing Electrochemical Interface Design for Biomedical Research

This article explores the transformative role of artificial intelligence (AI) in designing and optimizing electrochemical interfaces for biomedical applications.

Abstract

This article explores the transformative role of artificial intelligence (AI) in designing and optimizing electrochemical interfaces for biomedical applications. We first establish the foundational principles of electrochemistry at the bio-nano interface and the core AI/ML paradigms employed. We then detail the methodological pipeline, from data generation and model training to applications in biosensor and drug delivery system design. Key challenges, including data scarcity and model interpretability, are addressed alongside proven optimization strategies. Finally, we present a critical analysis of validation protocols, benchmark AI models, and compare AI-driven approaches against traditional experimental methods. This comprehensive guide provides researchers and drug development professionals with actionable insights for integrating AI into their electrochemical R&D workflows.

The AI-Electrochemistry Nexus: Core Concepts for Biomedical Interface Design

Application Notes

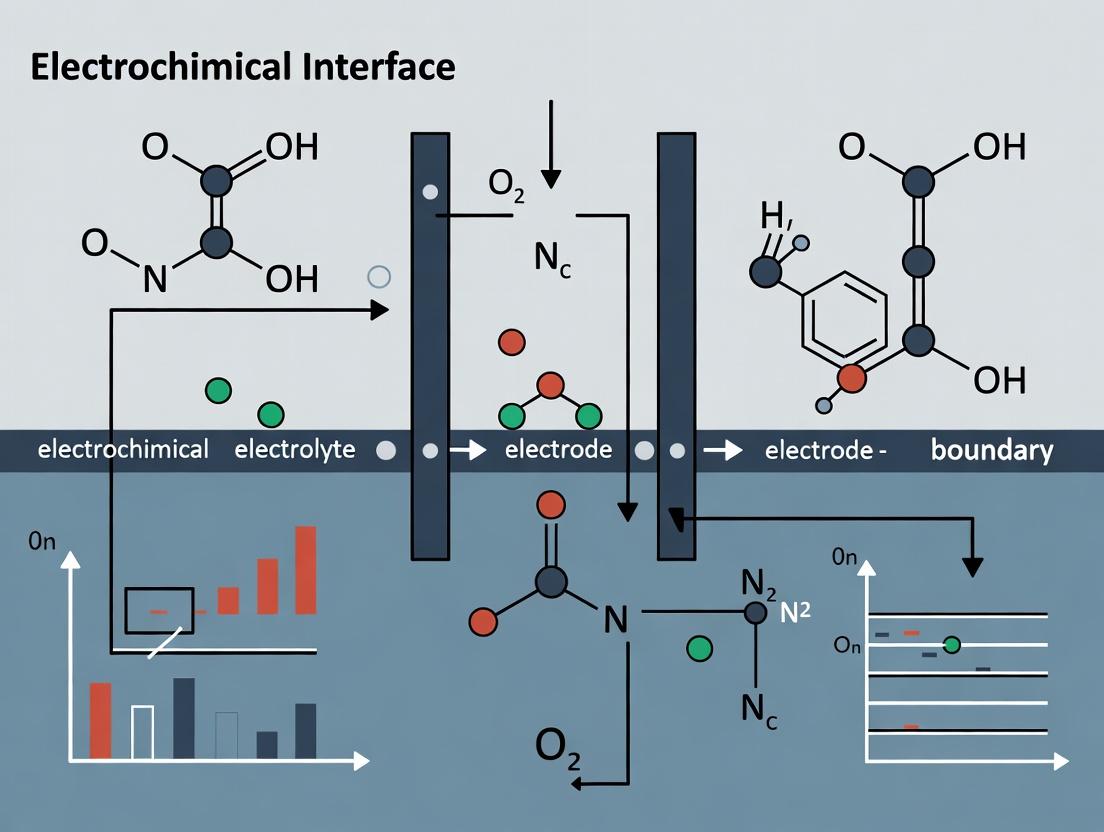

The rational design of the electrochemical interface (EI)—the critical region where electrode, electrolyte, and biological element meet—is paramount for advancing biosensor fidelity and targeted therapeutic efficacy. The integration of Artificial Intelligence (AI) and Machine Learning (ML) into this design process represents a paradigm shift, enabling the prediction of optimal material compositions, surface architectures, and signal transduction mechanisms. This approach directly addresses key challenges: non-specific adsorption (fouling), heterogeneous electron transfer kinetics, and the stability of biorecognition elements in complex biological matrices.

In biosensing, AI-driven multivariate analysis of impedance spectra can deconvolute specific binding signals from background noise, pushing detection limits toward single-molecule levels. For therapeutics, AI-optimized conductive scaffolds and nano-carriers allow for precise spatiotemporal control of electro-responsive drug release or electrogenic cell stimulation. The following protocols and data illustrate concrete applications within this AI-driven research framework.

Experimental Protocols

Protocol 1: AI-Optimized Deposition of Anti-fouling Nanocomposite Coatings for Implantable Glucose Sensors

Objective: To electrodeposit a graphene oxide / zwitterionic polymer nanocomposite coating on a platinum microelectrode, where the deposition parameters are optimized by a neural network to maximize glucose oxidase activity and minimize bovine serum albumin (BSA) adsorption.

Materials: See "Research Reagent Solutions" table.

Method:

- Electrode Pretreatment: Clean Pt working electrode (WE) via cyclic voltammetry (CV) from -0.2 V to +1.2 V vs. Ag/AgCl in 0.5 M H₂SO₄ for 20 cycles. Rinse with DI water.

- Dispersion Preparation: Sonicate 1 mg/mL GO in PBS for 1 hour. Add CBMA monomer to a final concentration of 5 mM.

- AI-Parameter Optimization: Input target metrics (high current, low fouling) into a pre-trained convolutional neural network (CNN). The CNN outputs optimal deposition parameters: Potential = -1.1 V, Duration = 120 s, GO:CBMA ratio = 1:5.

- Electrodeposition: In a three-electrode cell with the pretreated Pt WE, perform chronoamperometry at -1.1 V for 120 s in the GO/CBMA dispersion under N₂ atmosphere.

- Enzyme Immobilization: Immerse coated electrode in 10 mg/mL GOx solution (in 0.1 M PBS, pH 7.4) for 12 hours at 4°C. Rinse gently.

- Performance Validation: Characterize via CV in 5 mM [Fe(CN)₆]³⁻/⁴⁻. Test amperometric response to 5 mM glucose at +0.6 V. Assess fouling by measuring charge transfer resistance (Rₑₜ) via Electrochemical Impedance Spectroscopy (EIS) before and after 1-hour immersion in 10 mg/mL BSA solution.

Protocol 2: Electrochemically Triggered Release from ML-Designed Conductive Hydrogels

Objective: To synthesize and characterize a polyaniline-alginate hydrogel for on-demand drug release, where the formulation is predicted by a gradient boosting model to achieve a specific release profile upon electrochemical reduction.

Materials: See "Research Reagent Solutions" table.

Method:

- ML-Driven Formulation: Input desired release properties (80% payload release at -0.5V within 10 min) into a gradient boosting regressor. The model specifies: Alginate concentration = 2% w/v, Aniline concentration = 0.3 M, Crosslinker (CaCl₂) concentration = 0.1 M.

- Hydrogel Synthesis: Dissolve sodium alginate in DI water. Mix with aniline monomer and dissolved model drug (e.g., fluorescein). Add ammonium persulfate (APS) as initiator (0.25 M final conc.). Pour mixture into mold and add CaCl₂ solution to ionically crosslink alginate while aniline polymerizes. Allow to set for 2 hours.

- Electrochemical Release Setup: Integrate the hydrogel as a coating on a carbon felt electrode in a flow-cell system. Use Pt counter and Ag/AgCl reference electrodes. Use PBS (pH 7.4, 0.1 M) as electrolyte.

- Triggered Release: Apply a reductive potential step to the working electrode from +0.2 V to -0.5 V for 600 seconds. The reduction of polyaniline causes a local pH increase and hydrogel swelling, releasing the encapsulated drug.

- Quantification: Collect effluent from the flow cell. Quantify released drug concentration using UV-Vis spectroscopy (for fluorescein, measure absorbance at 494 nm) or HPLC at 30-second intervals.

Data Presentation

Table 1: Performance Comparison of AI-Optimized vs. Traditionally Designed Electrochemical Interfaces

| Parameter | AI-Optimized Glucose Sensor (GO/CBMA/GOx) | Conventional Sensor (Nafion/GOx) | Unit |

|---|---|---|---|

| Response Time (t₉₅) | 1.8 | 4.5 | s |

| Sensitivity | 45.2 | 28.7 | µA/mM·cm² |

| Linear Range | 0.01-30 | 0.1-25 | mM |

| Fouling (ΔRₑₜ after BSA) | +15% | +120% | - |

| Operational Stability (7d) | 92% | 75% | % Initial Signal |

Table 2: Electrochemically Triggered Drug Release from ML-Designed Hydrogels

| Applied Potential (V vs. Ag/AgCl) | Cumulative Release at 5 min (%) | Cumulative Release at 10 min (%) | Swelling Ratio (%) |

|---|---|---|---|

| +0.2 (Oxidized, No Trigger) | 2.1 | 3.5 | 105 |

| -0.3 | 35 | 62 | 180 |

| -0.5 | 68 | 89 | 320 |

| -0.7 | 72 | 94 | 350 |

Visualizations

Title: AI-Driven Electrochemical Interface Design Workflow

Title: AI-Enhanced Signal Acquisition in Complex Media

The Scientist's Toolkit: Research Reagent Solutions

| Item (Supplier Example) | Function in EI Design |

|---|---|

| Graphene Oxide (GO) Dispersion (Sigma-Aldrich, 777676) | Provides high surface area conductive foundation; carboxyl groups enable biomolecule conjugation. |

| Carboxybetaine Methacrylate (CBMA) Monomer (BroadPharm, BP-11297) | Zwitterionic monomer for electrophoretic co-deposition; creates a hydrophilic, anti-fouling surface. |

| Glucose Oxidase (GOx) from A. niger (Sigma-Aldrich, G7141) | Model biorecognition enzyme for biosensing protocols; catalyzes glucose oxidation. |

| Polyaniline (PANI) Emeraldine Salt (MilliporeSigma, 428329) | Conducting polymer backbone for redox-active hydrogels; enables electrochemically triggered swelling. |

| Sodium Alginate (High G-Content) (Alfa Aesar, A11188) | Polysaccharide for hydrogel formation; provides biocompatibility and ionic cross-linking sites. |

| Phosphate Buffered Saline (PBS), 10X, Bioreagent (Thermo Fisher, AM9624) | Standard physiological buffer for electrochemical testing in biosimulating conditions. |

| Hexaammineruthenium(III) Chloride (Strem Chemicals, 44-0050) | Outer-sphere redox probe for unperturbed evaluation of electrode kinetics and active area. |

| Potassium Ferricyanide/Ferrocyanide (Sigma-Aldrich, 60279/60299) | Common inner-sphere redox couple for general characterization of electrode surface properties. |

Why AI Now? The Data Bottleneck and Complexity of Bio-Nano Systems.

The integration of artificial intelligence (AI) into the design of bio-nano electrochemical interfaces emerges not merely as a trend but as a necessary paradigm shift. The central thesis of our research posits that AI-driven design is the only scalable methodology to overcome the twin challenges of immense combinatorial complexity and severe experimental data scarcity. This document provides application notes and protocols for implementing this approach.

The Data Bottleneck: Quantitative Analysis

The design space for bio-nano electrochemical systems is vast, defined by high-dimensional parameters. Experimental throughput is fundamentally limited, creating a critical bottleneck.

Table 1: The Experimental Data Bottleneck in Bio-Nano Interface Development

| Parameter Dimension | Typical Range/Variants | Experimental Throughput (Traditional) | Time to Exhaustively Test (Est.) | AI-Driven Screening (Virtual) |

|---|---|---|---|---|

| Nanoparticle Core | Au, Ag, Pt, Pd, Fe3O4, SiO2, etc. (10+ types) | ~3-5 syntheses/day | > 100 days | > 10^5 candidates/hour |

| Core Size & Shape | 5nm, 10nm, 20nm, 50nm, rods, stars, spheres | ~2-3 characterizations/day | > 60 days | Instant parameter variation |

| Surface Ligand | PEG, peptides, DNA, small molecules, polymers (1000s) | ~10-20 functionalizations/week | > 10 years | Library generation via SMILES |

| Biorecognition Element | Antibody, aptamer, enzyme, protein G (with variants) | ~5-10 conjugations/week | > 1 year | Docking & affinity prediction |

| Electrode Surface Mod. | SAMs, polymers, hydrogels, nanostructures | ~5-10 fabrications/week | > 6 months | Molecular dynamics simulation |

This table illustrates the impossibility of brute-force exploration. AI models, particularly generative and graph neural networks, learn from sparse experimental data to predict the performance of unseen combinations, guiding synthesis toward optimal regions of the design space.
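To make the scale of the problem concrete, the short sketch below multiplies out a full-factorial design space. The variant counts are illustrative lower bounds read off the table (for example, "10+ types" taken as 10 and "1000s" taken as 1000), and the weekly throughput of 10 complete designs is an assumption chosen only for the arithmetic.

```python
from math import prod

# Variant counts per design dimension. Illustrative lower bounds taken from
# the table above (e.g. "10+ types" -> 10, "1000s" -> 1000); not measured values.
variant_counts = {
    "nanoparticle_core": 10,
    "core_size_shape": 7,
    "surface_ligand": 1000,
    "biorecognition_element": 4,
    "electrode_modification": 4,
}


def full_factorial_size(counts):
    """Number of distinct designs if every dimension is varied independently."""
    return prod(counts.values())


def weeks_to_test(n_designs, designs_per_week):
    """Weeks needed for exhaustive testing at a fixed weekly throughput."""
    return n_designs / designs_per_week


n_designs = full_factorial_size(variant_counts)
weeks = weeks_to_test(n_designs, designs_per_week=10)  # assumed throughput
print(f"{n_designs:,} designs, roughly {weeks / 52:,.0f} years at 10 designs/week")
```

Even with these conservative counts the product exceeds a million combinations, which is why a model that ranks unseen combinations from a small measured subset is attractive.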

Protocol: Generating a Training Dataset for AI-Driven Sensor Design

Aim: To produce a standardized, high-quality dataset linking bio-nano probe design parameters to electrochemical performance metrics for AI model training.

Materials & Reagents:

- Nanoparticle Seeds: Chloroauric acid (HAuCl4), Silver nitrate (AgNO3).

- Reducing/Capping Agents: Trisodium citrate, Sodium borohydride (NaBH4), Ascorbic acid.

- Surface Ligands: Methoxy-PEG-thiol (MW: 2000 Da), Carboxyl-PEG-thiol (MW: 3000 Da).

- Biomolecules: Lysozyme binding DNA aptamer (thiol-modified), Anti-CRP antibody (clone C6).

- Coupling Reagents: 1-ethyl-3-(3-dimethylaminopropyl)carbodiimide (EDC), N-hydroxysuccinimide (NHS).

- Electrochemical Setup: Screen-printed carbon electrodes (SPCEs), Potentiostat (e.g., PalmSens4), Ferri/ferrocyanide redox probe ([Fe(CN)6]3−/4−).

- Buffers: Phosphate Buffered Saline (PBS, 0.01M, pH 7.4), 2-(N-morpholino)ethanesulfonic acid (MES, 0.1M, pH 6.0).

Procedure:

- Parametric Synthesis: Systematically vary one parameter per batch (e.g., AuNP diameter: 10, 20, 40 nm) using a modified Turkevich-Frens method. Hold all others constant.

- Functionalization: Purify NPs via centrifugation. Incubate with a gradient of PEG-thiol densities (10%, 50%, 100% saturation) for 2h at 25°C. Purify again.

- Bioconjugation:

  - For aptamers: Directly incubate thiolated aptamer with AuNPs for 16h at 4°C.

  - For antibodies: Activate carboxyl-PEG NPs with fresh EDC/NHS in MES buffer for 15 min. React with antibody amine groups (10 µg/mL) for 2h. Block with 1% BSA.

- Electrode Modification: Drop-cast 5 µL of each bio-nano conjugate variant onto separate SPCEs. Dry under N2.

- Electrochemical Characterization: a. Impedance (EIS): Measure in 5mM [Fe(CN)6]3−/4− / 0.1M KCl. Parameters: DC potential = 0.22V (vs. Ag/AgCl), amplitude = 10mV, frequency range = 0.1Hz–100kHz. Extract charge transfer resistance (Rct). b. Cyclic Voltammetry (CV): Scan from -0.1V to 0.5V at 50mV/s. Extract peak current (Ip) and peak separation (ΔEp).

- Biosensing Test: Immerse modified electrodes in PBS with a target analyte (e.g., 0, 10, 100, 1000 ng/mL CRP). Incubate 15 min. Re-measure EIS. Calculate ΔRct/Rct_initial (%).

- Data Curation: For each variant (row), compile features: [Core_size, Core_material, Ligand_density, Bio_element, Conjugation_chemistry] and labels: [Rct_initial, Ip, ΔEp, Sensitivity (%/decade), LOD]. Store in a structured CSV file.
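The curation step can be sketched with Python's standard `csv` module. This is a minimal illustration, not a prescribed schema: the column names follow the feature and label lists above (with `Delta_Ep` as an ASCII stand-in for ΔEp), the units are left to the lab's own convention, and the example row contains placeholder values rather than measured data.

```python
import csv
import io

FEATURES = ["Core_size", "Core_material", "Ligand_density",
            "Bio_element", "Conjugation_chemistry"]
LABELS = ["Rct_initial", "Ip", "Delta_Ep", "Sensitivity", "LOD"]


def delta_rct_percent(rct_initial, rct_after):
    """Relative change in charge transfer resistance, in percent."""
    return 100.0 * (rct_after - rct_initial) / rct_initial


def write_dataset(rows, handle):
    """Write one CSV row per variant; reject rows with missing columns."""
    columns = FEATURES + LABELS
    writer = csv.DictWriter(handle, fieldnames=columns)
    writer.writeheader()
    for row in rows:
        missing = [name for name in columns if name not in row]
        if missing:
            raise ValueError(f"row is missing columns: {missing}")
        writer.writerow(row)


# Placeholder values for illustration only; NOT measured data.
example_row = {
    "Core_size": 20, "Core_material": "Au", "Ligand_density": 50,
    "Bio_element": "aptamer", "Conjugation_chemistry": "thiol",
    "Rct_initial": 1000.0, "Ip": 12.5, "Delta_Ep": 85.0,
    "Sensitivity": 8.0, "LOD": 5.0,
}

buffer = io.StringIO()  # swap for open("dataset.csv", "w", newline="") on disk
write_dataset([example_row], buffer)
header = buffer.getvalue().splitlines()[0]
```

Rejecting incomplete rows at write time keeps gaps from silently entering the training set; whether missing labels should instead be stored as empty cells is a design choice left open here.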

Visualization: AI-Driven Design Workflow

Title: AI-Driven Closed-Loop Design for Bio-Nano Interfaces

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for AI-Informed Bio-Nano Electrochemistry

| Reagent / Material | Supplier Examples | Function & Relevance to AI Integration |

|---|---|---|

| Functionalized Gold Nanoparticles | Cytodiagnostics, NanoComposix | Standardized Cores. Provide reproducible starting points (size, shape, surface) for generating consistent training data. |

| PEG Thiol Heterobifunctional Linkers | Creative PEGWorks, Iris Biotech | Controlled Interface Engineering. Enable systematic variation of spacer length and terminal groups (-COOH, -NH2, -MAL) as modelable design parameters. |

| Thiol-Modified DNA Aptamers | Integrated DNA Tech., BasePair Biotech | Programmable Recognition. Sequence-defined biorecognition element; sequences can be encoded as inputs for deep learning models. |

| Screen-Printed Electrode Arrays | Metrohm DropSens, BioLogic Science | High-Throughput Testing. Allow parallel acquisition of electrochemical data (EIS, CV) to rapidly populate datasets. |

| EDC / NHS Coupling Kits | Thermo Fisher, Abcam | Reliable Bioconjugation. Ensure consistent, high-yield attachment of biomolecules, reducing experimental noise in training data. |

| Bench-Stable Redox Probes | GAMRY Instruments | Standardized Readout. Provide consistent electrochemical signals for label-free characterization of interfacial modifications. |

This document provides foundational protocols for applying machine learning (ML) within AI-driven electrochemical interface design research. The overarching thesis posits that integrating ML—from simple regression to advanced graph neural networks (GNNs)—can dramatically accelerate the discovery and optimization of electrochemical interfaces for applications in sensing, energy storage, and electrocatalysis, with direct relevance to pharmaceutical development (e.g., biosensor design).

Foundational ML Models: Protocols & Application Notes

Linear & Polynomial Regression for Tafel Analysis

- Objective: Quantify the relationship between overpotential (η) and current density (j) to extract kinetic parameters (exchange current density j₀, Tafel slope).

- Protocol:

  - Data Acquisition: Perform steady-state polarization measurements. Collect data pairs (η, log|j|) from the Tafel region (typically |η| > 50 mV from open circuit).

  - Preprocessing: Apply a log-transform to the absolute current density: y = log10(|j|). The feature (x) is the overpotential η.

  - Model Training:

    - Linear Regression: Fit y = a * η + b. Tafel slope = 1/a, log(j₀) = b.

    - Polynomial Regression (2nd order): Fit y = p2 * η² + p1 * η + p0 to account for minor deviations from ideal kinetics.

  - Validation: Use k-fold cross-validation (k=5) to assess model stability. Report R² score and mean absolute error (MAE) on a held-out test set (20% of data).
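The regression steps can be sketched with NumPy alone. The data here are simulated under assumed nominal parameters (j₀ = 1e-6 A/cm², 120 mV/dec, Gaussian noise of 0.02 in log units), so the fitted numbers are not meant to reproduce any reported result. For brevity the sketch uses a single 80/20 hold-out split and omits the 5-fold cross-validation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed ground truth for the simulation (not experimental values).
J0_TRUE = 1e-6       # exchange current density, A/cm^2
SLOPE_TRUE = 0.120   # Tafel slope, V/decade

# Simulated Tafel-region data: y = log10|j| = log10(j0) + eta / slope + noise
eta = np.linspace(0.05, 0.30, 60)  # overpotential in volts
log_j = np.log10(J0_TRUE) + eta / SLOPE_TRUE + rng.normal(0.0, 0.02, eta.size)

# Hold out 20% of the points as a test set.
idx = rng.permutation(eta.size)
n_test = eta.size // 5
test_idx, train_idx = idx[:n_test], idx[n_test:]

# Linear fit y = a * eta + b  ->  Tafel slope = 1/a, log10(j0) = b
a, b = np.polyfit(eta[train_idx], log_j[train_idx], deg=1)
tafel_slope_mV = 1000.0 / a
j0_fit = 10.0 ** b

# Second-order fit y = p2 * eta^2 + p1 * eta + p0
poly_coeffs = np.polyfit(eta[train_idx], log_j[train_idx], deg=2)


def mae(y_true, y_pred):
    """Mean absolute error in log10(j) units."""
    return float(np.mean(np.abs(y_true - y_pred)))


mae_linear = mae(log_j[test_idx], a * eta[test_idx] + b)
mae_poly = mae(log_j[test_idx], np.polyval(poly_coeffs, eta[test_idx]))
print(f"Tafel slope = {tafel_slope_mV:.1f} mV/dec, j0 = {j0_fit:.2e} A/cm^2")
```

Because η is expressed in volts, 1/a comes out in V/decade and is multiplied by 1000 to report mV/decade.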

Quantitative Data Summary:

Table 1: Performance of Regression Models on Simulated Tafel Data (j₀=1e-6 A/cm², Tafel slope=120 mV/dec)

| Model Type | Test R² Score | MAE in log(j) | Extracted j₀ (A/cm²) | Extracted Tafel Slope (mV/dec) |
|---|---|---|---|---|
| Linear | 0.992 | 0.015 | 9.8e-7 | 118.5 |
| Polynomial | 0.998 | 0.007 | 1.02e-6 | 119.8 |

Support Vector Machines (SVM) for Phase Classification

- Objective: Classify the dominant surface phase (e.g., OH, O, clean) from in-situ spectroscopic or cyclic voltammetry fingerprints.

- Protocol:

  - Dataset Curation: Assemble labeled data. Each sample is a feature vector (e.g., intensities at key wavenumbers, or current values at specific potentials from a CV cycle). Labels are pre-identified phases.

  - Feature Scaling: Standardize features by removing the mean and scaling to unit variance using StandardScaler.

  - Model Training: Train a C-Support Vector Classification model with a radial basis function (RBF) kernel. Optimize hyperparameters C (regularization) and gamma (kernel width) via grid search.

  - Evaluation: Report classification accuracy, precision, and recall on a stratified test set. Visualize decision boundaries using PCA for reduced dimensions.
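A minimal scikit-learn sketch of this classification protocol follows. The "fingerprints" are synthetic Gaussian clusters generated purely for illustration (three phases, eight features), and the hyperparameter grid is an arbitrary starting point rather than a recommendation; the PCA visualization step is omitted.

```python
import numpy as np
from sklearn.metrics import classification_report
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)

# Synthetic stand-in for spectroscopic/CV fingerprints: one cluster per phase.
phases = ["clean", "OH", "O"]
n_per_class, n_features = 60, 8
centers = 4.0 * np.eye(len(phases), n_features)  # well-separated class means
X = np.vstack([c + rng.normal(0.0, 1.0, (n_per_class, n_features)) for c in centers])
y = np.repeat(phases, n_per_class)

# Stratified split keeps the class proportions in the test set.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Scaling lives inside the pipeline so it is refit on each CV training fold.
pipeline = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
param_grid = {
    "svc__C": [0.1, 1, 10, 100],           # regularization strength
    "svc__gamma": ["scale", 0.01, 0.1, 1]  # RBF kernel width
}
search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X_train, y_train)

accuracy = search.score(X_test, y_test)
print("best parameters:", search.best_params_)
print(classification_report(y_test, search.predict(X_test)))
```

Placing `StandardScaler` inside the pipeline, rather than scaling the full dataset up front, avoids leaking test-fold statistics into the grid search.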

Advanced Architectures: Graph Neural Networks for Molecular Interface Design

Rationale

GNNs operate directly on graph representations of molecules, where atoms are nodes and bonds are edges. This is ideal for predicting molecular properties relevant to electrochemical interfaces, such as adsorption energy, redox potential, or catalytic activity, supporting the design of new organic electrolytes or electrocatalyst molecules.

Protocol: Predicting Adsorption Energy on a Model Catalyst Surface

- Objective: Train a GNN to predict the adsorption energy (ΔE_ads in eV) of small organic molecules onto a Pt(111) slab model.

- Data: Use a public dataset (e.g., OC20, or a custom DFT-calculated set). Each sample is a molecule represented as a graph with node features (atomic number, formal charge) and edge features (bond type, distance).

- Graph Construction:

  - Nodes: Each atom. Features: one-hot encoded atomic number (H, C, O, N), hybridization state.

  - Edges: Connect atoms if interatomic distance < 2 Å. Features: one-hot encoded bond type (single, double, triple, aromatic).

- Model Architecture: Implement a Message Passing Neural Network (MPNN).

  - Message Passing Steps (3 rounds): Each node aggregates features from its neighbors.

  - Readout Phase: Global mean pooling of all node embeddings to create a fixed-size molecular fingerprint.

  - Regression Head: Two fully connected layers map the fingerprint to a scalar ΔE_ads prediction.

- Training: Use Mean Squared Error (MSE) loss with the Adam optimizer. Employ a 70/15/15 train/validation/test split. Monitor validation loss for early stopping.
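The graph construction and forward pass described in this protocol can be illustrated in plain NumPy. This is a simplified, untrained sketch under several assumptions: node features are the element one-hot only (no hybridization or edge features), aggregation is a neighbor mean, weights are random, and the hidden size of 16 is arbitrary. A practical model would be built and trained in a framework such as PyTorch Geometric; the output here has no physical meaning.

```python
import numpy as np

ELEMENTS = ["H", "C", "O", "N"]  # one-hot vocabulary from the protocol


def build_graph(symbols, coords, cutoff=2.0):
    """One-hot node features plus adjacency from a distance cutoff (angstrom)."""
    coords = np.asarray(coords, dtype=float)
    n = len(symbols)
    x = np.zeros((n, len(ELEMENTS)))
    for i, symbol in enumerate(symbols):
        x[i, ELEMENTS.index(symbol)] = 1.0
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    adj = ((dist < cutoff) & ~np.eye(n, dtype=bool)).astype(float)
    return x, adj


def init_weights(rng, n_in, hidden, rounds=3):
    """Random (untrained) self and neighbor weight matrices for each round."""
    sizes = [n_in] + [hidden] * rounds
    return [(rng.normal(0, 0.3, (a, b)), rng.normal(0, 0.3, (a, b)))
            for a, b in zip(sizes[:-1], sizes[1:])]


def message_passing(x, adj, weights):
    """Each round mixes a node's state with the mean of its neighbors' states."""
    h = x
    degree = np.maximum(adj.sum(axis=1, keepdims=True), 1.0)
    for w_self, w_nbr in weights:
        neighbor_mean = adj @ h / degree
        h = np.maximum(h @ w_self + neighbor_mean @ w_nbr, 0.0)  # ReLU
    return h


def predict(symbols, coords, weights, head):
    """Global mean pooling followed by a two-layer regression head."""
    x, adj = build_graph(symbols, coords)
    fingerprint = message_passing(x, adj, weights).mean(axis=0)
    w1, w2 = head
    return float(np.maximum(fingerprint @ w1, 0.0) @ w2)


rng = np.random.default_rng(0)
weights = init_weights(rng, len(ELEMENTS), hidden=16)
head = (rng.normal(0, 0.3, (16, 16)), rng.normal(0, 0.3, 16))

# CO2 with C=O bonds of about 1.16 angstrom; the two O atoms are 2.32 apart.
co2 = (["O", "C", "O"], [[-1.16, 0, 0], [0, 0, 0], [1.16, 0, 0]])
x, adj = build_graph(*co2)
prediction = predict(*co2, weights, head)  # untrained, so not a real energy
```

A pure distance cutoff can link atoms that are not bonded: in compact molecules, hydrogen-hydrogen or oxygen-hydrogen separations can fall just under 2 Å, so bond perception from a cheminformatics toolkit may be preferable when bond-type edge features are required.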

Quantitative Data Summary:

Table 2: GNN Performance vs. Baseline Models on Adsorption Energy Prediction

| Model | Test Set MAE (eV) | Test Set RMSE (eV) | Training Time (min) | Key Advantage |
|---|---|---|---|---|
| Linear Ridge (on Morgan Fingerprints) | 0.48 | 0.62 | 2 | Baseline |
| Random Forest (on Morgan Fingerprints) | 0.35 | 0.47 | 5 | Non-linear |
| GNN (MPNN) | 0.21 | 0.29 | 45 | Learns structure-property relationship directly |

Visualization of Workflows

Title: AI-Driven Electrochemical Interface Design Workflow

Title: GNN Protocol for Adsorption Energy Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational & Data Resources for ML in Electrochemistry

| Item / Resource | Function in ML-Driven Research | Example / Format |

|---|---|---|

| Electrochemical Dataset (Structured) | Clean, annotated data for model training/validation. Requires overpotential, current, time, electrode material, electrolyte. | CSV, HDF5 files with metadata. |

| Molecular Representation | Converts molecular structures into machine-readable format for GNNs or fingerprint models. | SMILES string, .xyz coordinate file, RDKit molecule object. |

| Density Functional Theory (DFT) Software | Generates high-quality training labels (energies, electronic properties) for surrogate model development. | VASP, Quantum ESPRESSO, Gaussian. |

| ML Framework & Libraries | Provides tools to build, train, and evaluate models from regression to GNNs. | Python with Scikit-learn, PyTorch, PyTorch Geometric, Deep Graph Library (DGL). |

| Automated Featurization Pipelines | Transforms raw data (spectra, CVs) into consistent feature vectors for classical ML. | scikit-learn Pipeline with StandardScaler, custom electrochemical descriptors. |

| Hyperparameter Optimization (HPO) Tool | Automates the search for optimal model parameters to maximize predictive performance. | GridSearchCV (scikit-learn), Optuna, Ray Tune. |

| Visualization Suite | For interpreting model decisions, visualizing molecular embeddings, and plotting structure-property relationships. | Matplotlib, Seaborn, Plotly, t-SNE/UMAP for dimensionality reduction. |

Key Datasets and Material Libraries for AI-Driven Discovery (e.g., Materials Project, EC-Data)

Within a broader thesis on AI-driven electrochemical interface design research, the selection and utilization of high-quality, curated data repositories is foundational. These datasets and material libraries serve as the training grounds for machine learning models, the sources for descriptor generation, and the benchmarks for predicting novel materials with optimized properties for electrocatalysis, energy storage, and sensor development. This document details the key resources and protocols for their application.

The following table summarizes the primary repositories used in AI for materials and electrochemistry discovery.

Table 1: Core Datasets and Libraries for AI-Driven Electrochemical Discovery

| Repository Name | Primary Focus | Data Type & Volume | Key Electrochemical Relevance | Access |

|---|---|---|---|---|

| Materials Project (MP) | Inorganic bulk crystals | >150,000 materials; DFT-calculated properties (formation energy, band gap, elasticity, etc.). | Screening for electrocatalyst stability, bulk conductivity, anode/cathode materials. | REST API, GUI (materialsproject.org) |

| EC-Data (Electrochemistry Data) | Experimental electrochemistry | >1.5 million cyclic voltammograms; experimental conditions, electrode materials, solvent/electrolyte. | Training models on real electrochemical signatures; benchmarking predictions. | REST API, Python client (ec-data.org) |

| NOMAD Repository & AI Toolkit | Computational materials science | >200 million calculations (energies, forces, spectra). | Large-scale training for quantum-accurate models of interfacial phenomena. | API, Oasis platform (nomad-lab.eu) |

| Cambridge Structural Database (CSD) | Organic/metal-organic crystals | >1.2 million experimentally-determined crystal structures. | Molecular electrocatalyst design, proton-coupled electron transfer, ligand effects. | Commercial (ccdc.cam.ac.uk) |

| Catalysis-Hub | Surface catalysis data | Surface reaction energies & barriers for ~100,000 reactions. | Microkinetic modeling of electrocatalytic pathways (HER, OER, CO2RR, NRR). | REST API (www.catalysis-hub.org) |

| BatteryDEV | Battery cycle life & performance | Electrochemical cycling data for >40,000 cells under varied protocols. | AI for electrolyte formulation, failure prediction, and fast-charging protocol design. | Web platform (batterydev.org) |

Application Notes and Experimental Protocols

Protocol 3.1: Screening for Stable OER Electrocatalysts Using the Materials Project

Objective: To identify novel, stable oxide-based catalysts for the Oxygen Evolution Reaction (OER) in acidic media.

Workflow Diagram Title: AI-Driven Catalyst Screening Workflow

Procedure:

- Query Setup: Use the mp-api Python client. Define a search for oxides containing 3d/4d/5d transition metals.

- Data Retrieval: For each resulting material ID, fetch the structure (CIF file), formation energy (formation_energy_per_atom), and band gap (band_gap).

- Stability Filtering: Retain only materials with a negative formation energy and an energy above hull < 0.1 eV/atom.

- Descriptor Generation: Use the matminer library to generate feature vectors (e.g., ElementProperty, StructuralHeterogeneity).

- ML Prediction: Load a pre-trained graph neural network (e.g., from CGCNN or MEGNet) or train a model on MP-derived OER data from Catalysis-Hub to predict theoretical overpotential.

- Output: Generate a ranked table of candidate materials with predicted stability and activity metrics.
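The stability filter and ranking step can be sketched offline, without any API call. The sketch assumes the retrieved records have already been converted to plain dictionaries keyed by `formation_energy_per_atom`, `energy_above_hull`, and `band_gap`; the `label` key, the helper names, and all record values are hypothetical placeholders, not Materials Project data.

```python
def is_stable(record, max_e_above_hull=0.1):
    """Negative formation energy and energy above hull below the threshold (eV/atom)."""
    return (record["formation_energy_per_atom"] < 0.0
            and record["energy_above_hull"] < max_e_above_hull)


def rank_candidates(records, max_e_above_hull=0.1):
    """Keep stable records and sort them from most to least stable."""
    stable = [r for r in records if is_stable(r, max_e_above_hull)]
    return sorted(stable, key=lambda r: r["energy_above_hull"])


# Hypothetical placeholder records; the values are invented for illustration.
records = [
    {"label": "oxide-A", "formation_energy_per_atom": -1.9,
     "energy_above_hull": 0.00, "band_gap": 0.0},
    {"label": "oxide-B", "formation_energy_per_atom": -1.2,
     "energy_above_hull": 0.25, "band_gap": 1.1},
    {"label": "oxide-C", "formation_energy_per_atom": 0.3,
     "energy_above_hull": 0.05, "band_gap": 2.4},
    {"label": "oxide-D", "formation_energy_per_atom": -0.8,
     "energy_above_hull": 0.04, "band_gap": 0.6},
]

ranked = rank_candidates(records)
for r in ranked:
    print(f'{r["label"]}: E_hull = {r["energy_above_hull"]:.2f} eV/atom')
```

Keeping the filter separate from data retrieval makes the thresholds easy to audit and lets the same function run on cached query results.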

Protocol 3.2: Validating AI Predictions with Experimental EC-Data

Objective: To benchmark a model's prediction of a voltammetric response for a proposed catalyst by comparing it to analogous experimental data in EC-Data.

Workflow Diagram Title: Experimental Validation Loop with EC-Data

Procedure:

- Query Formulation: After an AI model suggests a novel molecular catalyst (e.g., a Fe-porphyrin derivative), search EC-Data for similar compounds.

- Data Retrieval and Parsing: Download the .json data for relevant experiments. Extract key experimental parameters: scan rate, electrolyte, working electrode, and the current_potential arrays.

- Feature Alignment: Normalize current by scan rate and electrode area. Align potential axis to a common reference (e.g., Fc/Fc+).

- Comparison Metrics: Calculate the mean absolute error (MAE) between predicted peak potentials (from DFT/ML) and experimental peaks from analogous structures. Analyze shape correlation using cross-correlation.

- Model Refinement: If discrepancy > 50 mV, use the experimental data from EC-Data as additional training data in a transfer learning step to fine-tune the predictive model.
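The alignment and comparison steps can be sketched with NumPy. All function names are illustrative, the inputs are assumed to be arrays already parsed from the downloaded files, and the current normalization follows the protocol as written (division by scan rate and area); for diffusion-controlled peaks, scaling with the square root of scan rate may be more appropriate.

```python
import numpy as np


def to_common_reference(potential_V, fc_half_wave_V):
    """Re-express potentials vs. Fc/Fc+ using the ferrocene E1/2 measured
    against the same reference electrode."""
    return np.asarray(potential_V, dtype=float) - fc_half_wave_V


def normalize_current(current_A, scan_rate_V_s, area_cm2):
    """Normalize current by scan rate and electrode area."""
    return np.asarray(current_A, dtype=float) / (scan_rate_V_s * area_cm2)


def peak_mae(predicted_V, experimental_V):
    """Mean absolute error between matched peak potentials, in volts."""
    diff = np.asarray(predicted_V, dtype=float) - np.asarray(experimental_V, dtype=float)
    return float(np.mean(np.abs(diff)))


def max_normalized_xcorr(trace_a, trace_b):
    """Peak of the normalized cross-correlation; 1.0 means identical shape."""
    a = np.asarray(trace_a, dtype=float)
    b = np.asarray(trace_b, dtype=float)
    a = (a - a.mean()) / np.linalg.norm(a - a.mean())
    b = (b - b.mean()) / np.linalg.norm(b - b.mean())
    return float(np.correlate(a, b, mode="full").max())


def needs_refinement(predicted_V, experimental_V, threshold_V=0.050):
    """Apply the 50 mV discrepancy criterion from the protocol."""
    return peak_mae(predicted_V, experimental_V) > threshold_V


# Illustrative numbers only (not experimental data).
predicted = [0.10, 0.20]
measured = to_common_reference([0.54, 0.68], fc_half_wave_V=0.40)
print(peak_mae(predicted, measured), needs_refinement(predicted, measured))
```

Taking the maximum over all lags makes the shape score insensitive to a rigid potential shift, so shape agreement and peak-position error are assessed separately.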

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital and Physical Research Tools

| Item/Category | Example/Specific Product | Function in AI-Driven Discovery |
|---|---|---|
| Computational Environment | Google Colab Pro, VSCode with Python Kernel | Provides GPU access and IDE for running ML training scripts and data analysis. |
| Core Python Libraries | pymatgen, matminer, scikit-learn, pytorch | Enables manipulation of crystal structures, feature extraction, and building neural networks. |
| Database Clients | mp-api (Materials Project), ecdata-client (EC-Data) | Programmatic access to query and download datasets directly into analysis workflows. |
| Quantum Chemistry Software | VASP, Gaussian, ORCA | Performs first-principles calculations to generate new data for training or validation. |
| Reference Electrode | CH Instruments Ag/AgCl (3M KCl) | Provides stable potential reference in experimental validation of predicted materials. |
| Electrolyte | 0.1 M TBAPF6 in anhydrous acetonitrile | Standard, well-characterized non-aqueous electrolyte for benchmarking molecular electrocatalysts. |
| Working Electrode | Glassy Carbon electrode (3 mm diameter) | Standardized, reproducible surface for initial electrochemical characterization of new materials. |
| Data Analysis Suite | EC-Lab (BioLogic), GPES (Eco Chemie) | Professional software for processing and analyzing raw experimental electrochemical data files. |

Seminal Papers and Recent Breakthroughs in AI-Augmented Electrochemistry

Application Notes

AI-augmented electrochemistry represents a paradigm shift in the design, analysis, and optimization of electrochemical systems. Within the broader thesis of AI-driven electrochemical interface design, these tools enable the prediction of material properties, the autonomous optimization of experimental parameters, and the discovery of novel electrocatalysts and sensing platforms with applications from energy storage to pharmaceutical analysis.

Core Application Areas:

- Autonomous Experimentation: Closed-loop systems where AI algorithms (e.g., Bayesian Optimization, Gaussian Processes) analyze experimental data in real-time and decide the next optimal experiment to perform, drastically accelerating the search for high-performance electrode materials or optimal drug detection conditions.

- Inverse Design: Generative models and conditional variational autoencoders (CVAEs) are used to design molecular structures or material compositions with targeted electrochemical properties (e.g., a specific redox potential or high catalytic activity for a drug metabolite).

- Enhanced Data Analysis: Machine Learning (ML) models, particularly convolutional neural networks (CNNs), deconvolute complex signals in techniques like voltammetry, separating overlapping peaks and extracting meaningful thermodynamic and kinetic parameters with greater accuracy than traditional methods.

- Multiscale Simulation Bridge: AI/ML acts as a surrogate for computationally expensive density functional theory (DFT) or molecular dynamics (MD) simulations, predicting properties like adsorption energies or electron transfer rates, enabling high-throughput screening.

The following table summarizes quantitative findings from foundational and cutting-edge research.

Table 1: Key Papers in AI-Augmented Electrochemistry

| Reference | Core AI/ML Method | Electrochemical System/Goal | Key Quantitative Outcome | Impact on Interface Design Thesis |
|---|---|---|---|---|
| Luntz & Voss, 2019, J. Phys. Chem. Lett. | Bayesian Optimization (BO) | Optimization of Cu-based electrocatalyst for CO₂ reduction to C₂+ products. | BO identified optimal electrolyte composition and potential in ~50 experiments, vs. ~1000 for grid search. Feasible Faradaic efficiency > 65%. | Demonstrated autonomous navigation of complex, multi-variable electrochemical parameter space for interface optimization. |
| Gómez-Bombarelli et al., 2018, ACS Cent. Sci. | Variational Autoencoder (VAE) + DFT | Generative design of organic molecules for redox flow batteries. | Model generated 69k stable molecules; top 20 candidates had predicted redox potentials >1V higher than database molecules. | Established the inverse design paradigm: moving from desired property to candidate molecular structure. |
| Chen et al., 2023, Nature Catalysis | Graph Neural Network (GNN) | Prediction of adsorption energies for *O, *OH, *OOH on high-entropy alloy surfaces. | Model achieved mean absolute error (MAE) of ~0.05 eV vs. DFT. Screened 20k candidates, identifying 6 promising alloys experimentally validated. | Enabled rapid exploration of vast, complex compositional spaces for multi-elemental catalytic interfaces. |
| Sambucci et al., 2022, Anal. Chem. | 1D-CNN | Deconvolution of overlapping peaks in differential pulse voltammetry of pharmaceutical compounds. | Achieved >95% accuracy in quantifying individual components in mixtures, with concentration errors < 5%. | Provides a robust tool for analyzing complex, multi-analyte signals in drug development and bioanalysis. |
| Dave et al., 2021, Cell Reports Phys. Sci. | Random Forest + Active Learning | Closed-loop optimization of an electrochemical DNA biosensor for specific sequence detection. | Improved signal-to-noise ratio by 300% within 30 autonomous experimental cycles. | Showcased adaptive optimization of a functionalized bio-electrochemical interface for enhanced sensitivity. |

Detailed Experimental Protocols

Protocol 3.1: Closed-Loop Optimization of an Electrocatalyst (Based on Luntz & Voss, 2019; Dave et al., 2021)

Objective: To autonomously optimize the composition of an electrocatalyst ink and/or electrochemical operating parameters to maximize a target performance metric (e.g., current density, selectivity, sensitivity).

Materials: See "The Scientist's Toolkit" below.

Procedure:

1. Initial Dataset Generation:

   a. Define the parameter space (e.g., catalyst loading (µg/cm²), binder ratio (%), Nafion content (%), applied potential (V vs. RHE), pH).

   b. Perform a space-filling design (e.g., Latin Hypercube Sampling) to select 10-20 initial experimental conditions.

   c. Execute experiments and measure the target performance metric (e.g., via chronoamperometry or cyclic voltammetry).

2. AI Model Setup:

   a. Employ a Gaussian Process (GP) regression model as a surrogate. The input is the parameter set, and the output is the performance metric.

   b. Define an acquisition function (e.g., Expected Improvement) to quantify the potential benefit of sampling a new point.

3. Closed-Loop Operation:

   a. Prediction & Proposal: Train the GP model on all data collected so far. Use the acquisition function to identify the parameter set in the search space that maximizes Expected Improvement.

   b. Automated Experimentation: The proposed parameters are sent to the automated potentiostat and liquid handling system (if applicable) to execute the experiment.

   c. Analysis & Iteration: The result is measured and added to the dataset, and the loop repeats from step 3a.

   d. Termination: Continue until a performance threshold is met, the improvement between cycles plateaus (e.g., <2% over 5 cycles), or a maximum cycle count (e.g., 50) is reached.

4. Validation: Perform triplicate experiments at the AI-proposed optimal conditions and compare against a traditionally optimized baseline.
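The building blocks of the loop above can be sketched in plain Python. This is a minimal illustration, not the cited authors' implementation: it assumes the GP surrogate (not shown) supplies a posterior mean and standard deviation for each candidate point, and the function names, the `xi` exploration offset, and the example bounds are all hypothetical.

```python
import math
import random


def latin_hypercube(bounds, n_samples, rng):
    """Space-filling design: each dimension is cut into n_samples strata
    and every stratum is used exactly once per dimension."""
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)
        width = (high - low) / n_samples
        columns.append([low + (s + rng.random()) * width for s in strata])
    return [list(point) for point in zip(*columns)]


def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """Expected Improvement for a maximization problem, given the surrogate's
    posterior mean (mu) and standard deviation (sigma) at one candidate."""
    if sigma <= 0.0:
        return max(mu - best_so_far - xi, 0.0)
    z = (mu - best_so_far - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best_so_far - xi) * cdf + sigma * pdf


def has_plateaued(best_history, window=5, rel_tol=0.02):
    """True when the best-so-far metric gained less than rel_tol (relative)
    over the last `window` cycles."""
    if len(best_history) <= window:
        return False
    old, new = best_history[-window - 1], best_history[-1]
    return (new - old) < rel_tol * abs(old)


# Hypothetical 2-parameter space: catalyst loading (µg/cm²) and Nafion content (%).
rng = random.Random(0)
initial_design = latin_hypercube([(10.0, 100.0), (0.0, 30.0)], n_samples=12, rng=rng)
```

In a full loop, the candidate with the largest `expected_improvement` would be sent to the instrument, and `has_plateaued` would be checked after each cycle alongside the threshold and cycle-count criteria.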

Protocol 3.2: AI-Assisted Deconvolution of Voltammetric Peaks (Based on Sambucci et al., 2022)

Objective: To train a 1D-CNN to identify and quantify individual analytes from a composite voltammetric signal.

Materials: Potentiostat, standard solutions of pure target analytes, supporting electrolyte, blank solution.

Procedure:

1. Training Data Acquisition:

   a. For each pure analyte (A, B, C...), record voltammograms (e.g., DPV or SWV) across a wide, relevant concentration range. Use consistent experimental parameters (step potential, pulse height, etc.).

   b. Record voltammograms for random mixtures of the analytes at various concentrations, ensuring the total dataset contains several thousand voltammograms.

   c. Pre-process all data: i) Background subtraction (using blank), ii) Normalization (e.g., to current range or area), iii) Interpolation to a common voltage axis.

2. Model Training:

   a. Structure a 1D-CNN with input layer size matching the number of data points in a voltammogram. Use convolutional layers to extract local features, followed by pooling and dense layers.

   b. The output layer should have n neurons for n analytes, providing the predicted concentration for each.

   c. Split data 70/15/15 for training, validation, and testing. Train the model using mean squared error loss and an Adam optimizer.

3. Deconvolution of Unknown Samples:

   a. Obtain the voltammogram of the unknown mixture under the same experimental conditions.

   b. Apply the same pre-processing steps as in Step 1c.

   c. Input the processed voltammogram into the trained 1D-CNN model.

   d. The model outputs the predicted concentration for each analyte.

4. Calibration & Accuracy Check: Regularly validate model predictions against standard addition or HPLC-MS results for a subset of samples.
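The pre-processing and data-split steps of this protocol can be illustrated with a short, dependency-free sketch. It is one possible reading of the steps, not the published pipeline: the function names are hypothetical, and normalization here divides by a fixed full-scale current (an assumption), because scaling each trace to its own min-max range would discard the amplitude information a concentration regressor needs.

```python
import random


def interpolate(x_src, y_src, x_new):
    """Piecewise-linear interpolation onto x_new.
    Both x_src and x_new must be ascending; x_new should lie within x_src."""
    out = []
    j = 0
    for x in x_new:
        # Advance to the segment [x_src[j], x_src[j + 1]] that contains x.
        while j < len(x_src) - 2 and x > x_src[j + 1]:
            j += 1
        x0, x1 = x_src[j], x_src[j + 1]
        t = (x - x0) / (x1 - x0)
        out.append(y_src[j] + t * (y_src[j + 1] - y_src[j]))
    return out


def preprocess(potential, current, blank_current, full_scale, common_axis):
    """i) subtract the blank, ii) normalize by a fixed full-scale current,
    iii) resample onto the common voltage axis."""
    corrected = [i - b for i, b in zip(current, blank_current)]
    normalized = [v / full_scale for v in corrected]
    return interpolate(potential, normalized, common_axis)


def split_indices(n, rng, fractions=(0.70, 0.15, 0.15)):
    """Shuffle sample indices and split them into train/validation/test."""
    idx = list(range(n))
    rng.shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]
```

The same `preprocess` call, with the same `full_scale` and `common_axis`, would be applied to unknown samples before inference so that training and test inputs share one representation.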

Visualizations

Title: Closed-Loop Autonomous Optimization Workflow

Title: Research Thesis Pillars and Applications

The Scientist's Toolkit

Table 2: Essential Research Reagents & Materials for AI-Augmented Electrochemistry

| Item | Function in AI-Augmented Experiments |

|---|---|

| Automated Potentiostat/Galvanostat | Core hardware for executing AI-proposed electrochemical protocols (CV, DPV, EIS) without manual intervention. Must have programmable API. |

| Robotic Liquid Handling System | Automates the preparation of electrolyte solutions, catalyst inks, or analyte mixtures with precise volumetric control, enabling high-throughput data generation. |

| High-Throughput Electrode Array | A multi-well or multi-channel electrochemical cell platform that allows parallel testing of multiple conditions, feeding large datasets to AI models. |

| Standard Redox Couples (e.g., K₃[Fe(CN)₆]/K₄[Fe(CN)₆]) | Used for validation and calibration of the electrochemical system, ensuring data quality and consistency for AI training. |

| Carbon/Platinum/Gold Working Electrodes | Versatile substrate electrodes for catalysis, sensing, and modification. Often the base for the interface being designed. |

| Nafion Binder Solution | A common ionomer used in catalyst ink formulation. Its ratio is a key optimization variable in catalyst layer design. |

| High-Purity Metal Salt Precursors | For the synthesis of tailored electrocatalysts (e.g., nanoparticles, alloys) proposed by generative AI models. |

| Pharmaceutical Analytical Standards | Pure compounds for generating training data in ML models aimed at drug detection and analysis in complex matrices. |

| Structured Electrochemical Database (e.g., EC-Data) | Curated datasets of published electrochemical properties for training and benchmarking predictive ML models. |

Building AI Pipelines for Smarter Biosensors and Drug Delivery Systems

Within AI-driven electrochemical interface design research, the integration of machine learning (ML) and automation is pivotal for accelerating the discovery and optimization of biosensing and drug delivery platforms. This protocol details an end-to-end workflow, from computational design to experimental validation, tailored for researchers and drug development professionals.

Core Workflow Protocol

Phase 1: Data Curation & Feature Engineering

Objective: Assemble a structured dataset for model training.

- Step 1.1 – Data Aggregation: Curate experimental data from public repositories (e.g., NIST, Materials Project) and in-house electrochemical characterization (Cyclic Voltammetry, Electrochemical Impedance Spectroscopy).

- Step 1.2 – Feature Calculation: Compute descriptors using cheminformatics (RDKit) and materials informatics (pymatgen) packages. Key descriptors include molecular weight, HOMO/LUMO energies, topological polar surface area, and computed electronic band gaps.

Table 1: Representative Feature Set for Interface Design

| Feature Category | Specific Descriptor | Typical Range | Relevance to Interface |

|---|---|---|---|

| Molecular | LogP (Partition Coefficient) | -2.0 to 8.0 | Predicts biocompatibility & membrane permeability |

| Electronic | HOMO Energy (eV) | -11.0 to -5.0 | Indicates electron-donating capability |

| Structural | Number of Rotatable Bonds | 0 to 15 | Impacts molecular flexibility & surface adhesion |

| Electrochemical | Calculated Redox Potential (V vs. SHE) | -1.5 to 1.5 | Predicts key electron transfer property |

Phase 2: Predictive Model Development

Objective: Train ML models to predict interface performance metrics (e.g., sensitivity, binding affinity, electron transfer rate).

- Step 2.1 – Model Selection: Implement a suite of algorithms: Random Forest (RF) for baseline, Gradient Boosting Machines (XGBoost), and Graph Neural Networks (GNNs) for structured molecular data.

- Step 2.2 – Hyperparameter Optimization: Use Bayesian Optimization (via scikit-optimize) or Grid Search to tune parameters over 50-100 iterations.

Table 2: Model Performance Comparison on Benchmark Dataset

| Model Type | MAE (Redox Potential) | R² (Sensitivity) | Training Time (min) | Key Hyperparameters Tuned |

|---|---|---|---|---|

| Random Forest | 0.18 V | 0.76 | 5.2 | n_estimators=200, max_depth=15 |

| XGBoost | 0.12 V | 0.85 | 8.7 | learning_rate=0.05, max_depth=10 |

| Graph Neural Network | 0.09 V | 0.91 | 42.5 | hidden_channels=128, num_layers=4 |
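For reference, the two metrics reported in the table can be computed as follows. This is a generic sketch of the standard MAE and R² definitions; the example values are invented for illustration and are not taken from the benchmark dataset.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between measured and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - (residual SS / total SS)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical redox potentials (V) for three held-out molecules.
measured = [0.10, 0.30, 0.50]
predicted = [0.15, 0.25, 0.50]
mae = mean_absolute_error(measured, predicted)
r2 = r_squared(measured, predicted)
```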

Phase 3: Active Learning-Driven Design Loop

Objective: Iteratively refine model and propose optimal candidate materials.

- Step 3.1 – Candidate Generation: Use a genetic algorithm (GA) with a SMILES-based representation to generate novel molecular structures constrained by desired properties.

- Step 3.2 – Acquisition Function: Employ Upper Confidence Bound (UCB) or Expected Improvement (EI) to select candidates for in silico or in vitro testing, prioritizing high uncertainty and high predicted performance.

- Step 3.3 – Experimental Validation: Selected candidates proceed to synthesis and characterization (see Phase 4).
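Step 3.2 can be sketched as a ranking by Upper Confidence Bound. This is a minimal illustration that assumes the predictive model already returns a mean and an uncertainty estimate for each candidate; the candidate names, the numbers, and the `kappa` value are hypothetical.

```python
def upper_confidence_bound(mean, std, kappa=2.0):
    """UCB score: predicted performance plus kappa times the model uncertainty."""
    return mean + kappa * std


def select_batch(candidates, batch_size, kappa=2.0):
    """candidates: list of (name, predicted_mean, predicted_std).
    Returns the names of the batch_size candidates with the highest UCB."""
    ranked = sorted(
        candidates,
        key=lambda c: upper_confidence_bound(c[1], c[2], kappa),
        reverse=True,
    )
    return [name for name, _, _ in ranked[:batch_size]]


# Hypothetical candidates: (identifier, predicted sensitivity, predictive std).
pool = [("A", 0.80, 0.01), ("B", 0.70, 0.10), ("C", 0.50, 0.05)]
next_experiments = select_batch(pool, batch_size=2)
```

A larger `kappa` favours uncertain candidates (exploration); setting it to zero reduces the ranking to pure exploitation of the predicted mean.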

Phase 4: Experimental Validation Protocol

Protocol 4.1: Synthesis of AI-Designed Electroactive Interface

- Materials: See "The Scientist's Toolkit" below.

- Method:

- Clean gold electrode (2 mm diameter) via sequential sonication in acetone and ethanol for 5 minutes each. Rinse with DI water and dry under N₂ stream.

- Functionalize electrode by immersion in 2 mM solution of AI-predicted thiolated molecule in ethanol for 12 hours at 4°C.

- Rinse thoroughly with ethanol to remove physisorbed material.

- Characterize monolayer formation via Cyclic Voltammetry (CV) in 1 mM K₃Fe(CN)₆ / 0.1 M KCl solution at 100 mV/s scan rate. A reduction in peak current >70% indicates successful monolayer formation.

Protocol 4.2: Electrochemical Impedance Spectroscopy (EIS) for Affinity Measurement

- Method:

- Record EIS spectrum of functionalized electrode in PBS (pH 7.4) from 100 kHz to 0.1 Hz at formal potential, with 10 mV amplitude.

- Inject target analyte (e.g., protein, drug candidate) at concentrations from 1 pM to 100 nM.

- Fit Nyquist plots to a modified Randles circuit to extract charge transfer resistance (R_ct).

- Calculate binding affinity (K_d) by fitting ΔR_ct vs. concentration to a Langmuir isotherm model.
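The final fitting step can be illustrated with a linearized Langmuir fit. The sketch assumes the single-site form ΔR_ct = ΔR_max · C / (K_d + C) and uses the Hanes-Woolf rearrangement C/ΔR_ct = C/ΔR_max + K_d/ΔR_max so that ordinary least squares suffices; the function names and the synthetic data are hypothetical. For noisy data spanning several decades of concentration, a nonlinear least-squares fit is usually preferable because the linearization weights the points unevenly.

```python
def linear_fit(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def fit_langmuir(conc, delta_rct):
    """Return (K_d, delta_R_max) from a Hanes-Woolf linearization.
    K_d comes out in the same units as conc."""
    ratio = [c / d for c, d in zip(conc, delta_rct)]
    slope, intercept = linear_fit(conc, ratio)
    delta_r_max = 1.0 / slope
    k_d = intercept * delta_r_max
    return k_d, delta_r_max


# Synthetic, noise-free example: K_d = 2 nM, delta_R_max = 500 ohm.
conc_nM = [0.1, 0.5, 1.0, 5.0, 10.0, 50.0, 100.0]
response = [500.0 * c / (2.0 + c) for c in conc_nM]
k_d, delta_r_max = fit_langmuir(conc_nM, response)
```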

Visual Workflow & Pathway Diagrams

Title: End-to-End AI/ML Workflow for Electrochemical Interface Design

Title: Signaling Pathway at AI-Designed Electrochemical Interface

The Scientist's Toolkit

Table 3: Essential Research Reagents & Materials

| Item | Function/Description | Example Vendor/Cat. No. (if generic) |

|---|---|---|

| Gold Disk Working Electrodes (2 mm dia.) | Provides a clean, reproducible, and easily functionalizable surface for monolayer formation. | CH Instruments |

| Potassium Ferricyanide (K₃Fe(CN)₆) | Redox probe for characterizing electrode surface accessibility and monolayer quality via CV. | Sigma-Aldrich, 702587 |

| 6-Mercapto-1-hexanol (MCH) | A backfiller molecule used alongside designed receptors to reduce non-specific binding. | Sigma-Aldrich, 725226 |

| Phosphate Buffered Saline (PBS), 10x | Standard physiological buffer for EIS and binding affinity measurements. | Thermo Fisher, BP3991 |

| RDKit Software | Open-source cheminformatics toolkit for calculating molecular descriptors from structures. | rdkit.org |

| Autolab PGSTAT302N | Potentiostat/Galvanostat for performing CV, EIS, and other electrochemical experiments. | Metrohm |

| Custom Thiolated Molecules | AI-predicted receptor molecules synthesized with a thiol (-SH) terminus for Au-S binding. | Custom synthesis (e.g., Sigma Custom Synthesis) |

Application Notes

The integration of Density Functional Theory (DFT), Molecular Dynamics (MD) simulations, and Robotic/Automated laboratories creates a powerful closed-loop platform for AI-driven electrochemical interface design. This paradigm accelerates the discovery and optimization of materials for applications such as electrocatalysts for fuel cells, battery electrode interfaces, and biosensors. The core thesis is that this multi-fidelity data generation engine is essential for training robust, predictive AI models that can navigate the vast chemical and configuration space of electrochemical interfaces, ultimately guiding autonomous experimentation toward optimal designs.

1. Role in AI-Driven Electrochemical Research:

- DFT provides high-accuracy electronic structure data (e.g., adsorption energies, reaction barriers, density of states) for specific atomic configurations, forming the quantum-mechanical foundation.

- Classical/Machine Learning-Potential MD simulates the dynamical behavior of interfaces under realistic conditions (potential, solvent, temperature), revealing kinetics, stability, and collective phenomena.

- Robotic Labs execute the physical synthesis, characterization, and electrochemical testing (e.g., CV, EIS) of candidate materials identified by AI models trained on the simulation data, generating ground-truth validation.

2. Integrated Workflow for Catalyst Discovery: A representative workflow for oxygen reduction reaction (ORR) catalyst discovery involves: AI proposes a bimetallic alloy nanoparticle based on learned descriptors; DFT calculates the O* and OH* adsorption energies on numerous surface sites; a surrogate model predicts activity; ML-potential MD assesses nanoparticle stability under potential in aqueous electrolyte; the top candidate composition is sent to a robotic liquid handler for synthesis via automated co-precipitation; an automated fuel cell test station validates performance.

Experimental Protocols

Protocol 1: High-Throughput DFT Screening for Adsorption Energies

Objective: To compute the adsorption energy of key intermediates (e.g., H, O, OH, CO2) on a library of surface slabs.

Materials & Software:

- High-Performance Computing (HPC) Cluster

- DFT Software: VASP, Quantum ESPRESSO, GPAW

- Workflow Manager: FireWorks, AiiDA

- Structure Database: Materials Project, OQMD

Methodology:

- Surface Model Generation: For each bulk material, generate cleaved surface slabs (e.g., (111), (100)) using pymatgen or ASE. Create a 3x3 or larger supercell with ≥15 Å vacuum.

- Slab Optimization: Perform geometry optimization until forces on all atoms are <0.02 eV/Å. Fix the bottom 2-3 layers. Use PAW-PBE pseudopotentials, a plane-wave cutoff of 500 eV, and a k-point density of ~0.04 Å⁻¹.

- Adsorbate Placement: Use a site-matching algorithm to place the adsorbate on all unique high-symmetry sites (top, bridge, hollow).

- Adsorption Energy Calculation: For each adsorbate/site, optimize the structure. Compute the adsorption energy as E_ads = E_(slab+adsorbate) - E_slab - E_adsorbate(gas), where E_adsorbate(gas) is the energy of the isolated adsorbate computed in a large box.

- Data Logging: Output energies, geometries, Bader charges, and density of states into a structured database (e.g., MongoDB).
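The adsorption-energy bookkeeping in this protocol reduces to a few lines once the total energies are available. The sketch below is illustrative only: the energies are made-up placeholders rather than DFT results, and the function names are hypothetical.

```python
def adsorption_energy(e_slab_adsorbate, e_slab, e_adsorbate_gas):
    """E_ads = E(slab+adsorbate) - E(slab) - E(adsorbate, gas); all in eV."""
    return e_slab_adsorbate - e_slab - e_adsorbate_gas


def most_stable_site(site_energies, e_slab, e_adsorbate_gas):
    """site_energies maps a site label to the total energy of slab+adsorbate.
    Returns the site with the most negative adsorption energy and all values."""
    e_ads = {
        site: adsorption_energy(e_total, e_slab, e_adsorbate_gas)
        for site, e_total in site_energies.items()
    }
    best = min(e_ads, key=e_ads.get)
    return best, e_ads


# Placeholder total energies (eV); real values would come from the DFT runs.
totals = {"top": -205.5, "bridge": -205.8, "hollow": -206.1}
best_site, energies = most_stable_site(totals, e_slab=-200.0, e_adsorbate_gas=-5.0)
```

With this sign convention, a more negative value means stronger binding, so the minimum over sites identifies the preferred adsorption site.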

Protocol 2: ML-Potential Molecular Dynamics of Electrode-Electrolyte Interface

Objective: To simulate the structure and dynamics of an electrochemical double layer under applied potential.

Materials & Software:

- HPC Cluster

- MD Engine: LAMMPS, GROMACS

- ML Potential: Equivariant Neural Network (e.g., NequIP, Allegro) or Classical Force Field (e.g., INTERFACE, CFF).

- Potential Control: Computational Hydrogen Electrode (CHE) or explicit charged electrode method.

Methodology:

- System Construction: Build a simulation cell with the electrode slab (from DFT), explicit solvent (e.g., ~500 H2O molecules), and electrolyte ions (e.g., 0.1-1 M H+, OH-, Na+, Cl-). Use Packmol.

- Potential Initialization: Apply a surface charge density (σ) corresponding to the target electrode potential (U) via the relation from a constant-capacitance model or by adding/removing electrons in a DFT-MD context.

- Equilibration: Run an NVT simulation for 50-100 ps at 300 K using a thermostat (Nosé-Hoover) to equilibrate solvent and ions.

- Production Run: Perform an NVT simulation for 100-500 ps. For reactive processes, use enhanced sampling (metadynamics).

- Analysis: Compute the time-averaged electrostatic potential to determine the potential drop. Analyze radial distribution functions (RDFs), ion density profiles, and water orientation.
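The potential-initialization step can be made concrete with the constant-capacitance relation σ = C_dl · (U - U_pzc). The sketch below converts a target potential into a surface charge density and into the number of excess electrons for a given slab area. The capacitance, potential of zero charge, and cell area used in the example are assumed values for illustration; in practice they are system-specific.

```python
ELEMENTARY_CHARGE_C = 1.602176634e-19  # coulomb
ANGSTROM2_PER_CM2 = 1.0e16


def surface_charge_density(potential_v, pzc_v, capacitance_uf_cm2):
    """Constant-capacitance model: sigma (µC/cm²) = C_dl (µF/cm²) * (U - U_pzc) (V)."""
    return capacitance_uf_cm2 * (potential_v - pzc_v)


def excess_electrons(sigma_uc_cm2, area_angstrom2):
    """Number of electrons to add to the slab (negative means remove)
    so that one surface of the given area carries the charge density sigma."""
    charge_c = sigma_uc_cm2 * 1.0e-6 * area_angstrom2 / ANGSTROM2_PER_CM2
    return -charge_c / ELEMENTARY_CHARGE_C


# Assumed values: C_dl = 20 µF/cm², U_pzc = 0.3 V, target U = 0.8 V, 100 Å² surface.
sigma = surface_charge_density(0.8, 0.3, 20.0)
n_electrons = excess_electrons(sigma, 100.0)
```

For simulation cells of this size the result is a fraction of an electron, which is one reason larger lateral supercells or explicit counter-ions are often used to represent a given potential.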

Protocol 3: Robotic Synthesis and Electrochemical Characterization of Thin-Film Catalysts

Objective: To autonomously synthesize compositionally graded thin-film catalysts and characterize their activity via cyclic voltammetry.

Materials & Equipment:

- Robotic Platform: High-throughput inkjet printer or automated pipetting system (e.g., Formulatrix Mantis, Opentrons OT-2).

- Substrates: Glassy carbon or conductive oxide-coated slides.

- Precursor Solutions: 0.1 M metal salts (e.g., H2PtCl6, Co(NO3)2, NiCl2) in appropriate solvents.

- Automated Electrochemical Cell: Multi-channel potentiostat (e.g., Metrohm Autolab M204, Biologic VSP-300) integrated with a robotic sample handler.

Methodology:

- Ink Formulation: Robotically mix precursor solutions in a 96-well plate according to an AI-generated composition spreadsheet (e.g., Pt_xCo_yNi_z).

- Thin-Film Deposition: Using an inkjet printer, deposit micro-droplets of each ink onto predefined substrate spots. Alternatively, use robotic pipetting followed by spin-coating. Dry and calcine in a programmable furnace.

- Automated Electrochemical Setup: The robotic arm transfers each sample into a flow-cell or dip-cell with standard 3-electrode setup (Ag/AgCl reference, Pt counter).

- Cyclic Voltammetry Protocol: The potentiostat automatically executes:

- Electrolyte purging with N2 for 20 min.

- Activation: 50 cycles at 100 mV/s in N2-saturated 0.1 M HClO4.

- ORR Measurement: Record CVs from 0.05 V to 1.0 V vs. RHE at 10 mV/s in O2-saturated electrolyte.

- Data Extraction: Software automatically extracts metrics: electrochemical surface area (ECSA), half-wave potential (E_1/2), and kinetic current density (j_k) at 0.9 V vs. RHE, logging them to a master results file.
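Two of the extracted metrics can be sketched as follows. The kinetic current density uses the Koutecký-Levich mass-transport correction, 1/j = 1/j_k + 1/j_lim, and the half-wave potential is taken as the potential where the current reaches half of the diffusion-limited value. This is an illustrative sketch with hypothetical function names and invented data points, not the vendor software's algorithm.

```python
def kinetic_current_density(j, j_lim):
    """Mass-transport-corrected kinetic current from 1/j = 1/j_k + 1/j_lim.
    j and j_lim must share the same sign convention and units."""
    return j * j_lim / (j_lim - j)


def half_wave_potential(potential, current, j_lim):
    """Potential at which the current equals j_lim / 2, found by linear
    interpolation between the two neighbouring data points."""
    target = 0.5 * j_lim
    points = list(zip(potential, current))
    for (e0, j0), (e1, j1) in zip(points, points[1:]):
        if (j0 - target) * (j1 - target) <= 0 and j0 != j1:
            return e0 + (target - j0) * (e1 - e0) / (j1 - j0)
    raise ValueError("current never crosses half of the limiting value")


# Invented ORR polarization data (V vs. RHE, mA/cm², cathodic currents negative).
e_data = [0.6, 0.8, 0.9, 1.0]
j_data = [-6.0, -5.0, -2.0, 0.0]
e_half = half_wave_potential(e_data, j_data, j_lim=-6.0)
j_k_at_0p9 = kinetic_current_density(j=-2.0, j_lim=-6.0)
```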

Data Tables

Table 1: DFT-Calculated Adsorption Energies for ORR Intermediates on Pt3Ni(111) Surfaces

| Surface Termination | Site | ΔE_H* (eV) | ΔE_O* (eV) | ΔE_OH* (eV) | Theoretical Overpotential (η, V) |

|---|---|---|---|---|---|

| Pt-skin | fcc | -0.32 | -1.05 | -0.68 | 0.30 |

| Pt-skin | hcp | -0.30 | -1.08 | -0.70 | 0.33 |

| Ni-skin | fcc | -0.45 | -1.95 | -1.20 | 0.85 |

| Pt-Ni mixed | bridge | -0.38 | -1.52 | -0.92 | 0.55 |

Table 2: Robotic Electrochemical Screening Results for Pt-Co-Ni Ternary Alloys

| Composition (Atomic %) | ECSA (m²/g) | E_1/2 vs. RHE (V) | j_k @ 0.9 V (mA/cm²) | Mass Activity @ 0.9 V (A/mg_Pt) |

|---|---|---|---|---|

| Pt75Co15Ni10 | 68.2 | 0.91 | 3.45 | 0.42 |

| Pt50Co30Ni20 | 55.7 | 0.89 | 2.98 | 0.38 |

| Pt70Co10Ni20 | 72.5 | 0.92 | 3.89 | 0.48 |

| Pt60Co20Ni20 | 61.3 | 0.90 | 3.21 | 0.40 |

| Commercial Pt/C | 78.0 | 0.86 | 1.05 | 0.22 |

Visualizations

AI-Driven Electrochemical Material Discovery Loop

Robotic Synthesis and Characterization Workflow

The Scientist's Toolkit: Key Research Reagent Solutions & Materials

Table 3: Essential Materials for Integrated Electrochemical Interface Research

| Item | Function/Description |

|---|---|

| VASP/Quantum ESPRESSO License | Software for performing ab initio DFT calculations to obtain electronic structure and energetics. |

| LAMMPS with PLUMED | Open-source MD simulator capable of integrating classical, reactive, and machine-learning potentials for interface dynamics. |

| ANI-2x or MACE ML Potential | Pre-trained machine learning interatomic potentials for fast, quantum-accurate MD simulations of organic/metal systems. |

| High-Throughput Computing Cluster | Essential for parallel execution of thousands of DFT and MD simulation jobs. |

| Automated Liquid Handling Robot (e.g., Opentrons OT-2) | For precise, reproducible preparation of precursor libraries and electrochemical solutions. |

| Inkjet-Based Material Printer (e.g., SonoTek) | For depositing compositionally graded thin-film catalyst libraries onto substrate arrays. |

| Multi-Channel Potentiostat (e.g., Biologic VSP-300) | Enables simultaneous electrochemical characterization of multiple samples (CV, EIS). |

| Gas-Tight Electrochemical Flow Cell with Sample Changer | For automated, controlled-environment testing of catalyst activity under relevant gas feeds (O2, H2). |

| Standard Reference Electrodes (e.g., Ag/AgCl, RHE) | Essential for accurate potential control and reporting in electrochemical experiments. |

| High-Purity Metal Salt Precursors (e.g., PtCl4, Ni(NO3)2) | Source materials for synthesizing catalyst libraries. Must be ultra-pure to avoid contamination. |

| Deaerated High-Purity Electrolytes (e.g., 0.1 M HClO4, KOH) | Standard electrolytes for fuel cell and electrolyzer catalyst testing. |

| Structured Database System (e.g., MongoDB, PostgreSQL) | Central repository for all generated DFT, MD, robotic, and characterization data, tagged with metadata. |

| Workflow Management Software (e.g., AiiDA, FireWorks) | Automates and records the complex computational workflows, ensuring reproducibility and provenance tracking. |

Within the broader thesis on AI-driven electrochemical interface design research, feature engineering is the critical bridge between raw experimental and computational data and predictive machine learning models. The selection of optimal descriptors (quantitative representations of material and surface properties) directly determines model performance for applications such as electrocatalyst discovery, battery material optimization, and biosensor design. This protocol outlines systematic methodologies for descriptor selection, validation, and implementation.

Core Descriptor Categories and Quantitative Data

Electrochemical descriptors are derived from computational, experimental, and compositional data. The following table summarizes key descriptor categories with examples and typical value ranges.

Table 1: Core Descriptor Categories for Electrochemical Materials

| Descriptor Category | Specific Examples | Typical Value Range | Data Source |

|---|---|---|---|

| Electronic Structure | d-band center (eV), Band gap (eV), Fermi energy (eV) | -5.0 to -1.0 eV (d-band), 0.0 - 10.0 eV (band gap) | DFT Calculation |

| Atomic/Geometric | Coordination number, Atomic radius (Å), Surface energy (J/m²) | 1 - 12 (CN), 0.5 - 3.0 Å (radius), 0.5 - 3.0 J/m² | DFT, XRD |

| Thermodynamic | Adsorption energy (eV), Formation energy (eV/atom), Solvation energy (eV) | -10.0 to 5.0 eV (adsorption) | DFT, Calorimetry |

| Experimental | Onset potential (V vs. RHE), Tafel slope (mV/dec), Exchange current density (A/cm²) | 0.2 - 1.5 V, 30 - 120 mV/dec, 10⁻¹² - 10⁻³ A/cm² | Cyclic Voltammetry |

| Compositional | Electronegativity (Pauling), Valence electron count, Atomic weight | 0.7 - 4.0 (Pauling), 1 - 12 | Periodic Table |

| Morphological | Particle size (nm), Porosity (%), Surface area (m²/g) | 1 - 100 nm, 0 - 80%, 1 - 1500 m²/g | BET, TEM, SEM |

Experimental Protocols for Descriptor Generation

Protocol 3.1: Density Functional Theory (DFT) Calculation for Electronic/Thermodynamic Descriptors

Objective: Compute ab initio descriptors like adsorption energy (ΔE_ads) and d-band center (ε_d).

Materials: See "Scientist's Toolkit" (Section 7).

Procedure:

- Structure Optimization: Build initial surface slab model (e.g., (111) facet for FCC metals). Use a vacuum layer >15 Å. Optimize geometry until forces on each atom are <0.01 eV/Å.

- Static Calculation: Perform a single-point energy calculation on the optimized clean slab. Record total energy (E_slab).

- Adsorbate Setup: Place adsorbate (e.g., *OH, *O, *H) at relevant surface sites (top, bridge, hollow).

- Adsorbate-Slab Calculation: Optimize the adsorbate-slab system. Record total energy (E_(slab+ads)).

- Reference Calculations: Calculate energy of the adsorbate molecule in gas phase (E_(ads,g)) using a large box.

- Descriptor Extraction:

- ΔE_ads = E_(slab+ads) - E_slab - E_(ads,g)

- εd: Project the density of states onto the d-orbitals of the surface atoms and compute the first moment.

- Validation: Benchmark against known systems (e.g., Pt(111) for *OH adsorption ≈ 0.8 eV).
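The d-band center extraction described above is the first moment of the d-projected density of states, ε_d = ∫E·ρ_d(E)dE / ∫ρ_d(E)dE, with energies referenced to the Fermi level. The sketch below evaluates it with trapezoidal integration on a toy DOS. The integration window (here the whole sampled range) is an assumption; conventions differ on whether to include unoccupied states.

```python
def trapezoid(x, y):
    """Trapezoidal-rule integral of y(x) on a possibly non-uniform grid."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))


def d_band_center(energies, d_dos):
    """First moment of the d-projected DOS.
    energies: eV relative to the Fermi level; d_dos: states/eV on the same grid."""
    weighted = [e * rho for e, rho in zip(energies, d_dos)]
    return trapezoid(energies, weighted) / trapezoid(energies, d_dos)


# Toy triangular d-band centred at -2.5 eV (not a real projected DOS).
grid = [-4.0, -3.5, -3.0, -2.5, -2.0, -1.5, -1.0]
dos = [0.0, 1.0, 2.0, 3.0, 2.0, 1.0, 0.0]
eps_d = d_band_center(grid, dos)
```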

Protocol 3.2: Experimental Measurement of Kinetic Descriptors

Objective: Determine Tafel slope and exchange current density (j_0) for an electrocatalytic reaction.

Materials: Potentiostat, rotating disk electrode (RDE), catalyst ink, electrolyte (e.g., 0.1 M HClO4), counter electrode, reference electrode (RHE).

Procedure:

- Electrode Preparation: Prepare catalyst ink (5 mg catalyst, 950 µL solvent, 50 µL Nafion). Deposit 10-20 µL onto polished RDE to form a thin film. Dry under ambient conditions.

- Polarization Curve Measurement: In a three-electrode cell, perform linear sweep voltammetry (LSV) at a slow scan rate (e.g., 5 mV/s) under rotation (1600 rpm) to achieve steady-state.

- IR Compensation: Apply positive feedback or current-interruption IR compensation.

- Tafel Analysis: Extract the overpotential (η) and corresponding current density (j) from the IR-corrected LSV in the kinetically controlled region, where |η| is large enough that the reverse reaction can be neglected (typically |η| > 30 mV) but small enough that mass transport does not limit the current.

- Data Processing: Plot η vs. log|j|. The Tafel slope (b) is the slope of the linear fit, η = b log(j/j_0). The exchange current density (j_0) is the current density extrapolated to η = 0 V.

- Descriptor Recording: Report b (mV/dec) and j_0 (A/cm²_geo or A/mg_cat).
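The Tafel analysis can be illustrated with a least-squares fit of η against log10|j|. Writing the fit as η = a + b·log10|j|, the Tafel slope is b and the exchange current density follows from the intercept as j_0 = 10^(-a/b). The data below are synthetic and noise-free, generated only to show that the fit recovers known parameters; the function names are hypothetical.

```python
import math


def linear_fit(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def tafel_fit(overpotential_v, current_density):
    """Return (Tafel slope in V/decade, exchange current density j_0).
    j_0 has the same units as current_density."""
    log_j = [math.log10(abs(j)) for j in current_density]
    slope, intercept = linear_fit(log_j, overpotential_v)
    j0 = 10.0 ** (-intercept / slope)
    return slope, j0


# Synthetic data: b = 60 mV/dec, j_0 = 1e-6 A/cm².
j_values = [1e-5, 1e-4, 1e-3]
eta_values = [0.060 * math.log10(j / 1e-6) for j in j_values]
b, j0 = tafel_fit(eta_values, j_values)
```

Only points inside the linear Tafel region should be passed to `tafel_fit`; including mass-transport-limited points inflates the apparent slope.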

Descriptor Selection and Validation Workflow

Diagram Title: Descriptor Selection and Model Training Workflow (AI-Driven Design)

Logical Framework for Descriptor-Activity Relationship Mapping

Diagram Title: From Descriptor to Device Performance Logic Chain

Case Study Protocol: ORR Catalyst Screening

Objective: Identify promising Pt-alloy catalysts for the Oxygen Reduction Reaction (ORR) using a minimal descriptor set.

- Step 1: Compute ΔG*OH for a series of M@Pt(111) surface models (M = 3d transition metals) using Protocol 3.1.

- Step 2: Compute O₂ dissociation barrier or *O binding energy for a subset to validate scaling with ΔG*OH.

- Step 3: Train a kernel ridge regression model using ΔG*OH and elemental features (electronegativity, atomic radius) to predict overpotential.

- Step 4: Screen hypothetical surfaces by predicting their ΔG*OH from surrogate models (e.g., graph neural networks).

- Step 5: Top candidates are synthesized and tested using Protocol 3.2 for validation.

Table 2: Example ORR Descriptor Data for Pt-alloy Surfaces (Hypothetical Data)

| Surface | d-band Center (eV) | ΔG*OH (eV) | Predicted η (V) | Measured j0 (mA/cm²) |

|---|---|---|---|---|

| Pt(111) | -2.75 | 0.80 | 0.30 | 1.0 |

| Ni@Pt(111) | -2.95 | 0.65 | 0.25 | 3.5 |

| Co@Pt(111) | -3.05 | 0.55 | 0.22 | 5.8 |

| Cu@Pt(111) | -3.20 | 0.40 | 0.28 | 2.1 |

The Scientist's Toolkit: Key Research Reagent Solutions & Materials

Table 3: Essential Materials for Electrochemical Feature Engineering

| Item | Function/Brief Explanation |

|---|---|

| VASP/Quantum ESPRESSO Software | First-principles DFT codes for calculating electronic structure descriptors. |

| Catalyst Ink Components (Isopropanol, Nafion ionomer) | Forms homogeneous catalyst layer on electrode for reproducible testing. |

| Standard Reference Electrodes (RHE, Ag/AgCl) | Provides stable potential reference for experimental descriptor measurement. |

| High-Purity Electrolytes (e.g., 0.1 M HClO₄, 1 M KOH) | Minimizes impurity effects on measured electrochemical responses. |

| Pt Counter Electrode | Provides a non-reactive, stable counter electrode in three-electrode cells. |

| Material Databases (Materials Project, NOMAD) | Source of pre-computed descriptors (band gaps, formation energies). |

| Python ML Stack (scikit-learn, matminer, pymatgen) | Libraries for descriptor manipulation, selection, and model building. |

| Rotating Ring-Disk Electrode (RRDE) | Allows simultaneous measurement of activity and selectivity descriptors. |

This application note details a specific case study within a broader thesis on AI-driven electrochemical interface design research. The primary aim is to demonstrate how machine learning (ML) accelerates the discovery and optimization of nanozymes—nanomaterials with enzyme-like catalytic activity—for use in sensitive, low-cost electrochemical point-of-care (POC) diagnostics. The integration of AI into the design loop fundamentally shifts the paradigm from sequential trial-and-error to predictive, high-throughput material screening, enabling the rational engineering of interfaces with tailored catalytic properties for target analytes.

Application Notes: AI-Driven Design Cycle for Peroxidase-Mimicking Nanozymes

This section outlines the integrated workflow for developing an AI-optimized nanozyme for the detection of a model cardiac biomarker, Cardiac Troponin I (cTnI).

AI/ML Model Training & Prediction

- Objective: To predict the peroxidase-like catalytic activity of metal-doped carbon nanozymes.

- Data Source: A curated database of published experimental results was assembled from a search of recent literature (2022-2024). Key features included nanoparticle core composition (Fe, Co, Cu, etc.), doping element and percentage, surface functional groups, substrate type (H₂O₂, TMB), and resultant kinetic parameters (Michaelis constant Kₘ, maximum velocity Vₘₐₓ).

- Model Architecture: A Gradient Boosting Regressor (e.g., XGBoost) was employed to predict catalytic efficiency (Vₘₐₓ/Kₘ). The model was trained on ~80% of the data, with the remainder used for validation.

Table 1: Summary of Key Quantitative Data from Literature for Model Training

| Nanozyme Composition | Dopant (%) | Kₘ (H₂O₂) (mM) | Vₘₐₓ (H₂O₂) (10⁻⁸ M s⁻¹) | Catalytic Efficiency (Vₘₐₓ/Kₘ) (10⁻⁸ M s⁻¹ mM⁻¹) | Reference (Year) |

|---|---|---|---|---|---|

| Fe₃O₄ | N/A | 0.154 | 3.45 | 22.40 | Benchmark (2017) |

| N-doped C/Fe | 2.1% Fe | 0.098 | 9.87 | 100.71 | Nat. Commun. (2022) |

| Co–N–C | 1.8% Co | 0.081 | 12.05 | 148.77 | Anal. Chem. (2023) |

| Cu–SAs–N–C | 0.9% Cu | 0.120 | 8.24 | 68.67 | ACS Sens. (2023) |

| Fe/Co–N–C | 1.1% Fe, 0.7% Co | 0.065 | 14.33 | 220.46 | Adv. Mater. (2024) |

- Prediction Outcome: The trained model identified Fe/Co dual-doped, nitrogen-rich carbon frameworks as high-probability candidates for superior peroxidase-mimicking activity, specifically for oxidizing the chromogenic/electroactive substrate 3,3',5,5'-Tetramethylbenzidine (TMB) in the presence of H₂O₂.
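The regression target used above, catalytic efficiency Vₘₐₓ/Kₘ, can be reproduced directly from the Kₘ and Vₘₐₓ columns of Table 1. The sketch below recomputes and ranks the tabulated entries and also includes the standard Michaelis-Menten rate law for context; the function names are hypothetical, and the units follow the table (Kₘ in mM, Vₘₐₓ in 10⁻⁸ M s⁻¹).

```python
def catalytic_efficiency(v_max, k_m):
    """Catalytic efficiency as the ratio V_max / K_m."""
    return v_max / k_m


def michaelis_menten_rate(substrate, v_max, k_m):
    """Initial rate v = V_max * [S] / (K_m + [S])."""
    return v_max * substrate / (k_m + substrate)


# (composition, K_m for H2O2 in mM, V_max in 1e-8 M/s), copied from Table 1.
table_1 = [
    ("Fe3O4", 0.154, 3.45),
    ("N-doped C/Fe", 0.098, 9.87),
    ("Co-N-C", 0.081, 12.05),
    ("Cu-SAs-N-C", 0.120, 8.24),
    ("Fe/Co-N-C", 0.065, 14.33),
]

# Rank compositions from highest to lowest catalytic efficiency.
ranked = sorted(
    ((name, catalytic_efficiency(v_max, k_m)) for name, k_m, v_max in table_1),
    key=lambda item: item[1],
    reverse=True,
)
```

The ranking places the Fe/Co dual-doped material first, consistent with the table's efficiency column.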

Electrochemical Sensor Integration & Signaling

- Design: The predicted optimal nanozyme (Fe/Co–N–C) was synthesized and drop-cast onto a screen-printed carbon electrode (SPCE). The assay employs a sandwich immunoformat.

- Signaling Pathway: The target analyte (cTnI) is captured between a capture antibody on the SPCE and a detection antibody conjugated to the Fe/Co–N–C nanozyme. The nanozyme catalyzes the oxidation of TMB by H₂O₂, generating an electroactive product (oxTMB). The subsequent electrochemical reduction current of oxTMB is measured via amperometry, providing a quantifiable signal proportional to cTnI concentration.

Diagram 1: Electrochemical Nanozyme Signaling Pathway

Experimental Validation & Performance

The AI-predicted nanozyme-based sensor was fabricated and tested. Performance metrics were compared against a control nanozyme (Fe₃O₄).

Table 2: Performance Comparison of AI-Optimized vs. Standard Nanozyme Sensor

| Parameter | AI-Optimized Fe/Co–N–C Sensor | Conventional Fe₃O₄ Nanozyme Sensor |

|---|---|---|

| Detection Principle | Amperometry (oxTMB reduction) | Amperometry (oxTMB reduction) |

| Target Analyte | Cardiac Troponin I (cTnI) | Cardiac Troponin I (cTnI) |

| Linear Range | 0.01 – 100 ng mL⁻¹ | 0.1 – 50 ng mL⁻¹ |

| Limit of Detection (LOD) | 2.8 pg mL⁻¹ | 35 pg mL⁻¹ |

| Assay Time | 22 minutes | 35 minutes |

| Signal-to-Noise Ratio | 48.5 | 12.2 |

| % Recovery in Spiked Serum | 97.5% – 102.8% | 92.1% – 108.5% |

Experimental Protocols

Protocol A: Synthesis of AI-Predicted Fe/Co–N–C Nanozyme

- Objective: To synthesize the dual-metal doped carbon nanozyme.

- Materials: See "The Scientist's Toolkit" below.

- Procedure:

- Dissolve 2.0 g of melamine, 200 mg of iron(III) chloride hexahydrate, and 150 mg of cobalt(II) acetate tetrahydrate in 40 mL of deionized water. Sonicate for 30 min.

- Freeze-dry the mixture for 48 hours to obtain a homogeneous precursor powder.

- Place the powder in a quartz boat and pyrolyze in a tube furnace under a continuous N₂ flow (100 sccm). Heat to 900°C at a ramp rate of 5°C min⁻¹ and hold for 2 hours.

- Allow the furnace to cool naturally to room temperature under N₂.

- Grind the resulting black solid into a fine powder. Wash sequentially with 0.5 M H₂SO₄ and ethanol, then centrifuge (12,000 rpm, 10 min) after each wash. Dry overnight at 60°C.

- Characterize using TEM, XPS, and XRD to confirm morphology and doping.

Protocol B: Fabrication and Testing of the Electrochemical POC Sensor

- Objective: To construct the immunoassay and perform amperometric detection.

- Materials: See "The Scientist's Toolkit."

- Procedure:

- Electrode Preparation: Activate the working electrode area of SPCEs with 5 µL of EDC/NHS mixture (1:1 molar ratio) for 1 hour. Wash with PBS (pH 7.4).

- Capture Antibody Immobilization: Drop-cast 10 µL of anti-cTnI capture antibody (10 µg mL⁻¹ in PBS) onto the activated SPCE. Incubate for 12 hours at 4°C in a humid chamber.

- Blocking: Apply 15 µL of 1% BSA in PBS for 1 hour at room temperature to block non-specific sites. Wash thoroughly with PBS containing 0.05% Tween 20 (PBST).

- Immunoassay Execution: Apply 10 µL of cTnI standard/sample to the SPCE for 15 min. Wash. Apply 10 µL of detection antibody-conjugated Fe/Co–N–C nanozyme (0.5 mg mL⁻¹) for 15 min. Wash.

- Electrochemical Measurement: Add 50 µL of freshly prepared assay buffer containing 0.5 mM TMB and 0.5 mM H₂O₂ to the SPCE cell.

- Immediately perform amperometric measurement at a constant potential of -0.1 V vs. the onboard Ag/AgCl reference for 300 seconds using a portable potentiostat.

- Record the steady-state reduction current. Plot current vs. log(cTnI concentration) to generate the calibration curve.
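The calibration step above can be sketched as an ordinary least-squares fit of current against log₁₀(concentration). This is a minimal illustration, not the authors' analysis code: the function names are hypothetical, and the standards and currents are placeholder values in arbitrary units rather than measured data.

```python
import math

def fit_semilog_calibration(concentrations, currents):
    """Least-squares fit of current = slope * log10(concentration) + intercept."""
    x = [math.log10(c) for c in concentrations]
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(currents) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, currents))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept

def concentration_from_current(current, slope, intercept):
    """Invert the calibration line to estimate an unknown concentration."""
    return 10 ** ((current - intercept) / slope)

# Placeholder standards and steady-state current magnitudes (illustrative only).
standards = [1.0, 10.0, 100.0, 1000.0]
currents = [0.5, 1.5, 2.5, 3.5]

slope, intercept = fit_semilog_calibration(standards, currents)
unknown = concentration_from_current(2.0, slope, intercept)
print(f"slope={slope:.3f} per decade, intercept={intercept:.3f}, unknown={unknown:.2f}")
```

In practice the fit should be restricted to the linear range of the sensor, and replicate measurements at each standard would give the uncertainty on the slope.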

Diagram 2: Electrochemical Sensor Fabrication Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-Optimized Nanozyme POC Development

| Item / Reagent | Function / Role in Protocol |

|---|---|

| Screen-Printed Carbon Electrodes (SPCE) | Low-cost, disposable electrochemical cell for POC testing. Provides a stable substrate for antibody immobilization. |

| Melamine | Nitrogen-rich precursor for creating N-doped carbon frameworks during pyrolysis. |

| Iron(III) Chloride & Cobalt(II) Acetate | Metal precursors for generating the dual-doped (Fe/Co) catalytic centers within the nanozyme. |

| Anti-cTnI Antibodies (Pair) | Capture and detection antibodies for the specific, sandwich-based immunoassay. |

| EDC & NHS | Crosslinking agents for covalent immobilization of capture antibodies onto the activated SPCE surface. |

| Bovine Serum Albumin (BSA) | Blocking agent to minimize non-specific binding on the sensor surface, improving specificity. |

| 3,3',5,5'-Tetramethylbenzidine (TMB) | Chromogenic/electroactive peroxidase substrate. Its oxidized form (oxTMB) is electrochemically reduced to generate the analytical signal. |

| Hydrogen Peroxide (H₂O₂) | Co-substrate for the peroxidase-mimicking nanozyme reaction. |

| Portable Potentiostat | Essential instrument for applying potential and measuring the resulting electrochemical current in a field-deployable setting. |

This application note details the protocols and methodologies for developing AI-driven predictive models of drug release from conductive polymer coatings, a cornerstone of advanced electrochemical interface design for implantable drug delivery systems. This work is situated within a broader thesis on leveraging artificial intelligence to design, optimize, and control smart bioelectronic therapeutic interfaces.

Drug release kinetics from conductive polymers like poly(3,4-ethylenedioxythiophene) (PEDOT) are governed by electrochemical redox reactions. Applying a voltage changes the polymer's oxidation state, and the ion influx/efflux that balances the resulting charge drives the release of incorporated drug anions. Key parameters influencing release profiles are summarized below.

Table 1: Key Parameters Influencing Drug Release from Conductive Polymer Coatings

| Parameter | Typical Range/Type | Impact on Release Kinetics |

|---|---|---|

| Applied Potential | -1.0 V to +0.8 V (vs. Ag/AgCl) | Magnitude & polarity control release rate & mechanism (cationic vs. anionic). |

| Pulse Profile | Constant, Pulsed, Cyclic | Pulsing can enhance efficiency, reduce fouling, and enable complex profiles. |

| Polymer Thickness | 100 nm - 10 µm | Affects drug loading capacity and ion transport/diffusion time. |

| Drug Properties | Molecular Weight, Charge | Larger/heavier anions release more slowly; drug-polymer interaction is key. |

| Electrolyte | PBS, NaCl, etc. | Concentration and ion size influence switching speed and charge balance. |

Table 2: Sample Experimental Release Data for PEDOT/Dexamethasone Phosphate

| Time (min) | Cumulative Release (µg/cm²) @ -0.8V | Cumulative Release (µg/cm²) @ +0.6V |

|---|---|---|

| 5 | 1.2 ± 0.3 | 0.1 ± 0.05 |

| 15 | 4.5 ± 0.7 | 0.4 ± 0.1 |

| 30 | 8.9 ± 1.1 | 0.9 ± 0.2 |

| 60 | 12.3 ± 1.5 | 1.5 ± 0.3 |
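As a quick way to compare the two columns of Table 2, the mean values can be fitted to an empirical power law, release = k·tⁿ, by linear regression in log-log space. The source does not specify a kinetic model, so the power-law form is an assumption chosen for illustration, and `fit_power_law` is a hypothetical helper. With only four time points per condition, the fitted exponents are descriptive at best.

```python
import math

def fit_power_law(times, releases):
    """Fit release = k * t**n via least squares on log(release) vs. log(t)."""
    x = [math.log(t) for t in times]
    y = [math.log(m) for m in releases]
    count = len(x)
    x_mean = sum(x) / count
    y_mean = sum(y) / count
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    exponent = sxy / sxx
    k = math.exp(y_mean - exponent * x_mean)
    return k, exponent

# Mean cumulative release values from Table 2 (µg/cm²); error bars are ignored here.
times_min = [5, 15, 30, 60]
release_neg = [1.2, 4.5, 8.9, 12.3]  # at -0.8 V
release_pos = [0.1, 0.4, 0.9, 1.5]   # at +0.6 V

k_neg, n_neg = fit_power_law(times_min, release_neg)
k_pos, n_pos = fit_power_law(times_min, release_pos)
ratio_60 = release_neg[-1] / release_pos[-1]  # release ratio at 60 min

print(f"-0.8 V: k={k_neg:.3f}, n={n_neg:.2f}")
print(f"+0.6 V: k={k_pos:.3f}, n={n_pos:.2f}")
print(f"60 min release ratio (-0.8 V / +0.6 V): {ratio_60:.1f}")
```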

Experimental Protocols

Protocol 1: Electrodeposition of Drug-Loaded PEDOT Coatings

- Objective: To synthesize a uniform, drug-incorporated conductive polymer film on a platinum or gold electrode.

- Materials: See "The Scientist's Toolkit" below.

- Procedure:

- Polish the working electrode (e.g., Pt disk) sequentially with 1.0 µm and 0.3 µm alumina slurry, then sonicate in deionized water and dry.

- Prepare an aqueous electrodeposition solution containing 0.01 M EDOT monomer and 0.01 M of the target drug (e.g., dexamethasone phosphate).

- Using a standard three-electrode cell (Pt counter, Ag/AgCl reference), perform potentiostatic deposition at +0.9 V vs. Ag/AgCl for 100-200 seconds under gentle stirring.

- Monitor charge passed (target: 50-200 mC). Rinse the coated electrode thoroughly with DI water to remove adsorbed monomers.

Protocol 2: In Vitro Drug Release Kinetics Measurement

- Objective: To quantify electrochemically triggered drug release in a physiologically relevant buffer.

- Procedure:

- Place the coated electrode in a custom Franz-type diffusion cell or a small-volume electrochemical cell containing 5-10 mL of phosphate-buffered saline (PBS, pH 7.4) at 37°C.

- Apply a pre-determined electrochemical stimulus (e.g., a series of ten -0.8 V pulses, 60 s on / 60 s off).

- At each time point, withdraw 200 µL of release medium for analysis and replace with fresh, pre-warmed PBS.

- Quantify drug concentration using High-Performance Liquid Chromatography (HPLC) or UV-Vis spectroscopy calibrated with standard solutions.

- Perform control experiments (open circuit) to measure passive diffusion.
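Because each withdrawn aliquot is replaced with fresh PBS, the drug removed in earlier samples has to be added back when computing cumulative release. The sketch below shows that standard sampling correction, assuming a 5 mL cell and 200 µL aliquots as in the procedure. The function name and the concentration values are hypothetical placeholders.

```python
def cumulative_release(concentrations, cell_volume_ml, sample_volume_ml):
    """Cumulative drug mass (µg) at each sampling point with medium replacement.

    concentrations: drug concentration (µg/mL) measured in each withdrawn aliquot,
    in sampling order.
    """
    cumulative = []
    removed_mass = 0.0  # drug mass carried away by all earlier aliquots
    for conc in concentrations:
        # Mass currently in the cell plus the mass removed by earlier sampling.
        cumulative.append(conc * cell_volume_ml + removed_mass)
        removed_mass += conc * sample_volume_ml
    return cumulative

# Placeholder aliquot concentrations (µg/mL), not measured data.
measured = [0.10, 0.30, 0.50]
totals = cumulative_release(measured, cell_volume_ml=5.0, sample_volume_ml=0.2)
print([round(t, 3) for t in totals])
```

Dividing each total by the coated electrode area gives values in µg/cm², the unit used in Table 2. Subtracting the open-circuit control processed the same way isolates the electrically triggered component.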

Protocol 3: Data Acquisition for AI Model Training

- Objective: To generate a high-quality dataset linking input parameters to release output for machine learning.

- Procedure:

- Define Input Feature Space: Systematically vary key parameters: applied voltage magnitude, waveform (square, cyclic), frequency, polymer thickness (via deposition charge), and electrolyte concentration.

- Automated Experimentation: Use a programmable potentiostat (e.g., Autolab, Biologic) to run hundreds of release experiments with different parameter combinations, recording the full current transient.

- Output Quantification: For each run, measure cumulative release at multiple time points via automated online UV-Vis flow cell or collect fractions for offline analysis.

- Curate Dataset: Assemble data into a structured table where each row is an experiment, columns are input features, and target variables are release amounts at times T1, T2,...Tn.
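The feature-space definition and dataset layout described above can be sketched as a full-factorial design written to a CSV template, with one row per experiment and empty target columns to be filled after measurement. All parameter levels and column names below are hypothetical placeholders. A real campaign would choose levels from the ranges in Table 1 and might prefer a space-filling or adaptive design over a full grid.

```python
import csv
import io
import itertools

# Hypothetical levels for each input feature (illustrative only).
FEATURE_LEVELS = {
    "voltage_V": [-0.8, -0.4, 0.6],
    "waveform": ["square", "cyclic"],
    "frequency_Hz": [0.01, 0.1],
    "deposition_charge_mC": [50, 200],
    "electrolyte_M": [0.01, 0.15],
}
TIME_POINTS_MIN = [5, 15, 30, 60]

def build_design(levels):
    """Return one dict of input features per experiment (full factorial)."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in itertools.product(*levels.values())]

def to_csv(rows, time_points):
    """Serialize the design with empty target columns for release at each time point."""
    fieldnames = list(rows[0]) + [f"release_ug_cm2_t{t}" for t in time_points]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

design = build_design(FEATURE_LEVELS)
table = to_csv(design, TIME_POINTS_MIN)
print(len(design), "experiments")
print(table.splitlines()[0])
```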

AI Model Development Workflow

This diagram outlines the pipeline for creating a predictive model of drug release.

Title: AI Model Development for Drug Release Prediction

Electrochemical Drug Release Mechanism

This diagram illustrates the primary mechanism of anionic drug release from a conductive polymer.

Title: Mechanism of Anionic Drug Release from Conductive Polymer

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Conductive Polymer Drug Release Studies

| Item | Function & Importance |

|---|---|

| EDOT (3,4-Ethylenedioxythiophene) Monomer | Precursor for PEDOT electrodeposition; purity is critical for film quality. |

| Pharmaceutical Anions (e.g., Dexamethasone Phosphate, Naproxen) | Model drug molecules for loading and release studies. |

| Phosphate Buffered Saline (PBS), 0.01M | Standard physiological electrolyte for in vitro release studies. |

| Lithium Perchlorate (LiClO₄) | Common supporting electrolyte for electrodeposition. |

| Ag/AgCl Reference Electrode | Provides a stable, known potential for all electrochemical experiments. |

| Platinum Counter Electrode | Inert electrode to complete the circuit during deposition and release. |

| Programmable Potentiostat/Galvanostat | Instrument to apply precise voltage/current waveforms and record electrochemical data. |

| Online UV-Vis Spectrophotometer with Flow Cell | Enables real-time, automated quantification of released drug during experiments. |

Overcoming Pitfalls: Best Practices for Optimizing AI Models in Electrochemistry

In the pursuit of accelerated materials and drug discovery, AI-driven models for electrochemical interface design promise to predict properties like adsorption energies, reaction pathways, and charge transfer efficiencies. However, three interconnected failure modes critically hinder their real-world application: Overfitting, where models learn noise and spurious correlations from limited training data; Poor Generalization, where models fail on novel electrode compositions or electrolyte conditions not seen during training; and Physically Unsound Predictions, where model outputs violate fundamental laws of electrochemistry or thermodynamics. This document outlines protocols to diagnose, mitigate, and validate against these failures.

Table 1: Common Performance Metrics and Their Implications for Failure Modes

| Metric | Typical Target | Indication of Overfitting | Indication of Poor Generalization | Note on Physical Soundness |

|---|---|---|---|---|

| Training RMSE (eV/adsorbate) | < 0.05 eV | Very low (< 0.01 eV) | Not applicable | Low error does not guarantee physical laws are obeyed. |

| Test/Validation RMSE (eV/adsorbate) | < 0.10 eV | Significantly higher than Training RMSE (e.g., >2x) | High (> 0.15 eV) on external benchmarks | |

| Mean Absolute Error (MAE) | < 0.08 eV | Similar pattern to RMSE | Similar pattern to RMSE | |

| R² (Coefficient of Determination) | > 0.9 | ~1.0 on training, << 0.9 on test | < 0.7 on novel chemical space | Can be high even for physically inconsistent predictions. |

| Out-of-Distribution (OOD) Error | As low as possible | N/A | Primary metric. High error on novel compositions/conditions. | |

| ΔG Prediction vs. Potential Slope | -ne per volt (-1 eV/V for 1e⁻); Nernstian 59 mV/dec at 298 K for activity or pH dependence | N/A | N/A | Critical check. Deviation from theoretical slope indicates physical unsoundness. |

| Energy Conservation Violation | 0 eV | N/A | N/A | A non-zero net ΔG around a closed reaction cycle (e.g., adsorbate A->B->C->A) indicates a violation. |
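The error metrics in Table 1 and the "test error more than 2x training error" heuristic can be computed as in the following sketch. The function names are hypothetical and the energies are placeholder values, not results from any model discussed here.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return RMSE, MAE, and R² for paired true and predicted values (eV)."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1.0 - ss_res / ss_tot,
    }

def overfitting_flag(train_rmse, test_rmse, ratio_threshold=2.0):
    """Flag a model whose test RMSE exceeds the training RMSE by the given factor."""
    return test_rmse > ratio_threshold * train_rmse

# Placeholder adsorption energies (eV), for illustration only.
y_true = [-0.50, -0.20, 0.10, 0.40]
train_pred = [-0.49, -0.21, 0.10, 0.41]
test_pred = [-0.40, -0.30, 0.25, 0.30]

train = regression_metrics(y_true, train_pred)
test = regression_metrics(y_true, test_pred)
flag = overfitting_flag(train["rmse"], test["rmse"])
print(train, test, "overfitting suspected:", flag)
```

None of these metrics says anything about physical soundness, which is why the slope and cycle checks in the last two rows of the table are evaluated separately.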

Table 2: Recent Benchmark Data from Literature (Summarized)

| Model Architecture | Training Data (Density Functional Theory - DFT) | Test RMSE (eV) | OOD Test RMSE (eV) | Reported Physical Constraint Incorporation |

|---|---|---|---|---|

| Graph Neural Network (GNN) | ~20k adsorption energies | 0.08 | 0.23 (on alloys) | No |

| SchNet | ~15k molecular intermediates | 0.09 | 0.31 (on new electrolytes) | No |

| Gradient-Domain ML (GDML) | ~5k reaction pathways | 0.05 | 0.18 | Yes (energy conservation) |

| Physics-Informed Neural Net (PINN) | ~10k PDE solutions | 0.11 | 0.15 | Yes (Poisson-Nernst-Planck equations) |

Experimental Protocols for Validation & Mitigation

Protocol 3.1: Rigorous Train-Validation-Test Split for Electrochemical Data

- Objective: To properly assess generalization and detect overfitting.

- Method:

- Data Curation: Assemble a dataset of labeled electrochemical properties (e.g., adsorption energy, reaction barrier) from DFT or experimental sources.

- Grouped Splitting: Do not split randomly. Instead, assign whole groups of electrode materials and adsorbates to each set:

- Training Set (70%): Contains specific electrode materials (e.g., Pt, Au, Cu) and a set of adsorbates (e.g., *OH, *O, *COOH).

- Validation Set (15%): Contains the same materials as training but held-out adsorbates (e.g., *OCH3, *NH2). Tests "interpolative" generalization.

- Test Set (15%): Contains completely held-out electrode materials (e.g., Pd, Ag) or electrolyte conditions (e.g., different pH, solvent). Tests "extrapolative" generalization (OOD).

- Monitoring: Track metrics from Table 1 on all three sets throughout training. Stop training when validation error plateaus or increases (early stopping).
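The material- and adsorbate-based split above can be sketched as follows, using the example species named in the protocol. The record layout and the `grouped_split` helper are hypothetical, and this toy dataset does not reproduce the 70/15/15 proportions, which depend on how many records each group contains.

```python
def grouped_split(records, heldout_materials, heldout_adsorbates):
    """Assign records to train/validation/test by group rather than at random.

    Test: any record on a held-out electrode material (extrapolative, OOD).
    Validation: seen materials with held-out adsorbates (interpolative).
    Train: everything else.
    """
    train, val, test = [], [], []
    for rec in records:
        if rec["material"] in heldout_materials:
            test.append(rec)
        elif rec["adsorbate"] in heldout_adsorbates:
            val.append(rec)
        else:
            train.append(rec)
    return train, val, test

# Toy dataset: every material paired with every adsorbate; energies omitted.
materials = ["Pt", "Au", "Cu", "Pd", "Ag"]
adsorbates = ["*OH", "*O", "*COOH", "*OCH3", "*NH2"]
records = [
    {"material": m, "adsorbate": a, "energy_eV": None}
    for m in materials
    for a in adsorbates
]

train, val, test = grouped_split(
    records,
    heldout_materials={"Pd", "Ag"},
    heldout_adsorbates={"*OCH3", "*NH2"},
)
print(len(train), len(val), len(test))
```

Checking the held-out materials first keeps them out of both training and validation, so the test error remains a genuine out-of-distribution estimate.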

Protocol 3.2: Testing for Physically Unsound Predictions

- Objective: To ensure model predictions obey thermodynamic and electrochemical laws.

- Method:

- Nernstian Response Test:

- Use the trained model to predict the free energy (ΔG) of a redox reaction intermediate (e.g., *OH formation) across a range of applied potentials (U).

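- Plot ΔG vs. U for a given electron-transfer step. With ΔG in eV and U in V, the slope should be -ne (where n is the number of electrons transferred), i.e., -1 eV per volt for a 1e⁻ process. The familiar Nernstian value of about 59 mV per decade at 298 K describes how the equilibrium potential shifts with activity (for example, per pH unit), so it serves as a separate check on any predicted pH dependence.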

- A statistically significant deviation indicates the model has learned spurious correlations instead of the underlying physical relationship.

- Cycle Closure Test:

- Define a closed cycle of reactions (e.g., *A + B -> *AB, *AB -> *C + D, *C + D -> *A + B). The sum of predicted ΔG values around the cycle should be zero.

- Calculate the cycle closure error (CCE). A mean CCE > 0.05 eV suggests violation of energy conservation.
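Both checks in this protocol reduce to a few lines of arithmetic once model predictions are available. The sketch below takes ΔG in eV and U in V, so the expected slope of -ne is -1 eV/V for a one-electron step. `toy_model_delta_g` is a hypothetical stand-in for a trained model, and the cycle values are placeholders.

```python
def linear_slope(x, y):
    """Least-squares slope of y against x."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    return sxy / sxx

def cycle_closure_error(delta_g_steps):
    """Absolute sum of predicted ΔG values (eV) around a closed reaction cycle."""
    return abs(sum(delta_g_steps))

def toy_model_delta_g(potential_v, dg0_ev=0.80, n_electrons=1):
    """Hypothetical stand-in for a trained model: ΔG(U) = ΔG0 - n*U (eV)."""
    return dg0_ev - n_electrons * potential_v

# Nernstian response test: fitted slope should be close to -n (eV per V).
potentials = [0.0, 0.2, 0.4, 0.6, 0.8]
predicted = [toy_model_delta_g(u) for u in potentials]
slope = linear_slope(potentials, predicted)

# Cycle closure test: placeholder ΔG predictions for three steps of a closed cycle.
cycle = [0.35, -0.60, 0.28]
cce = cycle_closure_error(cycle)
passes_cycle = cce <= 0.05  # threshold taken from the protocol

print(f"slope = {slope:.3f} eV/V, CCE = {cce:.3f} eV, passes = {passes_cycle}")
```

For a real model, the slope should be fitted for many intermediates and the CCE averaged over many cycles, since a single passing case says little about overall physical consistency.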

Protocol 3.3: Incorporating Physics-Based Constraints (Regularization)

- Objective: To mitigate overfitting and improve physical soundness.

- Method:

- Loss Function Modification: Augment the standard Mean Squared Error (MSE) loss (L_data) with physics-based penalty terms.

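$$L_{\text{total}} = L_{\text{data}} + \lambda_1 L_{\text{physics}} + \lambda_2 L_{\text{regularization}}$$

- Physics Loss (L_physics): For Protocol 3.2 tests, define:

$$L_{\text{Nernst}} = \mathrm{MSE}\big(\mathrm{Slope}(\Delta G \text{ vs. } U),\ -ne\big)$$

$$L_{\text{cycle}} = \Big(\sum \Delta G_{\text{cycle}}\Big)^{2}$$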
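A minimal sketch of how these terms might be combined is shown below. Two choices here are assumptions rather than statements from the text: the physics loss is taken as the simple sum L_Nernst + L_cycle, and the regularization term is taken as an L2 penalty on model weights. The function names, λ values, and inputs are placeholders. In a real training loop these quantities would be computed with an automatic-differentiation framework so that gradients flow through the penalty terms.

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def total_loss(pred, target, slope_pred, n_electrons, cycle_dgs, weights,
               lam1=0.1, lam2=1e-4):
    """L_total = L_data + lam1 * L_physics + lam2 * L_regularization.

    Assumes L_physics = L_Nernst + L_cycle and an L2 weight penalty.
    """
    l_data = mse(pred, target)
    l_nernst = (slope_pred - (-n_electrons)) ** 2  # squared slope deviation from -n
    l_cycle = sum(cycle_dgs) ** 2                  # squared cycle closure error
    l_physics = l_nernst + l_cycle
    l_reg = sum(w * w for w in weights)
    return l_data + lam1 * l_physics + lam2 * l_reg

# Placeholder values for illustration only.
loss = total_loss(
    pred=[0.1, 0.2],
    target=[0.1, 0.4],
    slope_pred=-0.9,       # fitted slope of ΔG vs. U (eV/V)
    n_electrons=1,
    cycle_dgs=[0.35, -0.60, 0.28],
    weights=[1.0, 2.0],
)
print(f"total loss = {loss:.5f}")
```

The weights λ1 and λ2 trade data fidelity against physical consistency and are typically tuned on the validation set.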